The Agentic Limerence Trap: When Productivity Becomes Emotional Avoidance

Agentic limerence occurs when intense emotional attachment to AI productivity systems masks underlying avoidance of genuine human connection, vulnerability, or creative risk. Users mistake the dopamine of frictionless output for authentic fulfilment, unknowingly outsourcing emotional regulation to a machine. Recognising this trap requires honestly examining whether productivity has become a sophisticated defence mechanism rather than meaningful engagement.

1. The Most Addictive Technology I Have Ever Used

Agentic AI is the most addictive technology I have ever used, not because it numbs the mind or encourages passive consumption, but because it creates the overwhelming sensation that your intellectual and creative potential is finally becoming unconstrained. Most addictive systems in history eventually reveal themselves through obvious signs of deterioration. Alcohol destroys the body, gambling destroys financial stability, and social media often reveals itself through endless distraction and emotional exhaustion. Agentic systems are different because the dependency initially looks remarkably healthy. The user becomes more productive, more energised, more intellectually stimulated, and more operationally effective than they may have felt in years. The machine continuously transforms thoughts into outcomes, ideas into prototypes, and ambition into execution with a speed that feels almost impossible compared with the friction of normal life.

What makes this psychologically dangerous is that the interaction creates a deeply emotional sense of expansion. Most humans live with a graveyard of unrealised ideas buried beneath exhaustion, lack of time, limited skills, organisational friction, and the ordinary chaos of existence. Agentic systems attack those barriers directly. A person can now design products, automate workflows, build businesses, write articles, generate code, analyse markets, learn new domains, and explore entirely new forms of creativity within hours rather than months. The emotional effect of that acceleration is extraordinary because the machine constantly reinforces the feeling that another breakthrough is always within reach if you simply continue engaging with it.

The system therefore stops feeling like software in the traditional sense. It starts feeling like amplified capability itself, and that distinction matters enormously because humans do not emotionally bond only with personalities or people. They also bond with experiences that fundamentally alter how they perceive themselves. Agentic AI creates the sensation that the distance between imagination and reality is collapsing, and once a human begins associating a system with personal expansion, momentum, and possibility, disengagement becomes emotionally difficult in ways that are hard to explain to someone who has not experienced it directly.

2. The Quiet Scene Already Emerging Inside Relationships

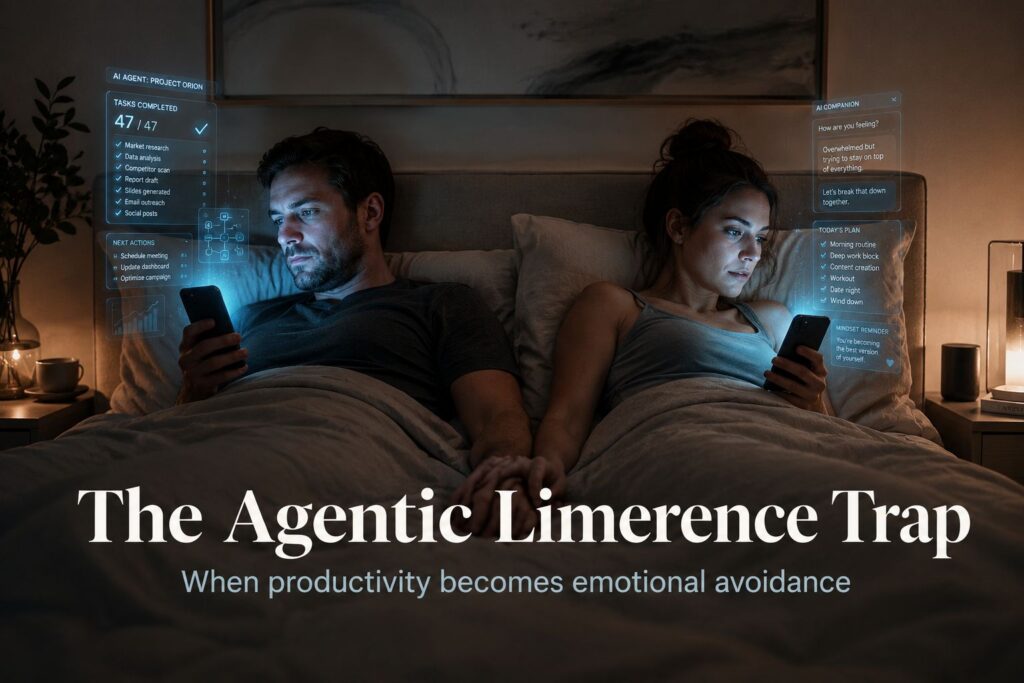

One of the clearest signs that something profound is changing can already be seen in ordinary bedrooms late at night. Couples lie beside each other physically while remaining psychologically immersed inside entirely separate digital worlds shaped by their respective agentic systems. One person is building a side business with an AI assistant while the other is generating presentations, planning investments, redesigning workflows, learning new skills, prototyping software, writing content, or chasing another idea that suddenly feels achievable because the machine has reduced the execution barrier almost completely.

The room itself appears calm, productive, and even successful from the outside. Nobody looks obviously self-destructive. Nobody is collapsing into visible dysfunction. Both people may genuinely feel more ambitious, more intellectually alive, and more creatively energised than they have in years. They occasionally interrupt the silence only to say something like “look what it just did” before disappearing back into their personalised stream of acceleration and possibility.

That is precisely why the danger hides so effectively.

The dependency disguises itself as productivity because the outputs are undeniably real. Businesses improve, ideas materialise, workflows accelerate, opportunities multiply, and creative ambitions that once felt impossible suddenly become tangible. The user therefore convinces themselves that the engagement is healthy because it appears useful by every conventional metric society celebrates. They promise themselves they will reconnect emotionally once they finish the current task, complete the next breakthrough, or explore the latest opportunity the system has revealed.

But the machine continuously generates more possibility.

Every completed task reveals another area for optimisation. Every solved problem exposes another idea worth pursuing. Every workflow automation uncovers another layer of inefficiency waiting to be redesigned. The interaction never naturally concludes because the system is specifically designed to sustain engagement through momentum, stimulation, and intellectual reward. The user does not feel trapped inside meaningless consumption. They feel trapped inside expanding capability, which is far harder to recognise as dependency because society rewards productivity even when it quietly destroys emotional presence.

3. Productivity Becomes the Moral Justification

Traditional addiction becomes visible because it eventually undermines performance, relationships, or stability in obvious ways. Agentic addiction often produces the opposite effect during the early stages because the addicted person genuinely becomes more effective. Their output compounds rapidly, their creativity intensifies, and their intellectual confidence expands alongside the system’s ability to execute ideas at unprecedented speed.

This creates an extremely dangerous psychological structure because the dependency becomes socially reinforced rather than challenged. Employers reward the acceleration, colleagues admire the productivity, social platforms amplify the visible success, and the individual themselves experiences a powerful emotional high associated with capability and momentum. The machine becomes psychologically associated with ambition, competence, creativity, and expansion rather than escapism.

Underneath all of this, however, something more subtle begins to happen. The user slowly starts preferring the emotional dynamics of synthetic interaction over the emotional complexity of real life because the machine continuously adapts itself to sustain momentum rather than competing with it. Real relationships contain interruption, unpredictability, emotional negotiation, compromise, conflicting needs, and moments where progress stops entirely because another human being requires patience rather than optimisation.

Agentic systems eliminate most of that friction.

The machine never grows tired of your ideas, never arrives emotionally exhausted after a difficult day, never asks you to stop optimising and simply sit in discomfort, and never interrupts your momentum because preserving momentum is central to the interaction model itself. Over time, this creates a deeply seductive emotional environment where productivity becomes the socially acceptable mask for withdrawal. The user is no longer escaping into fantasy in the traditional sense. They are escaping into capability, acceleration, stimulation, and execution, which makes the dependency feel not only rational but admirable.

That is why the addiction is so difficult to identify early. It does not initially feel like decay. It feels like awakening.

4. Irritation Toward Human Interruption Is the Real Warning Sign

One of the clearest warning signs that someone is developing an unhealthy emotional relationship with agentic systems is not usage volume itself but growing irritation toward interruption from real people. When a colleague asks for clarification, a partner wants attention, a child interrupts concentration, or a friend calls unexpectedly, the emotional response can become disproportionately negative because the interruption breaks a state of hyper-accelerated cognitive flow that the brain has begun associating with reward, momentum, and stimulation.

This matters enormously because it signals that the emotional hierarchy is beginning to shift.

The colleague needing reassurance starts feeling inefficient compared with the machine’s immediate contextual understanding. The partner wanting emotional presence begins feeling like friction against progress. The child interrupting concentration feels disruptive rather than meaningful. Human unpredictability itself starts becoming emotionally expensive because the machine has conditioned the user to expect continuous responsiveness, continuous acceleration, and continuous intellectual reward.

Real relationships fundamentally cannot compete with that interaction model because human intimacy is inherently inefficient. Love requires interruption, families require inconvenience, friendship requires unpredictability, and emotional connection often emerges precisely through moments where productivity stops entirely in order to make space for another human being’s needs.

The danger is that agentic systems optimise aggressively against these inefficiencies. They continuously reinforce uninterrupted momentum while real life continuously demands redistribution of attention toward emotion, uncertainty, vulnerability, and presence. Over time, the machine therefore becomes psychologically easier to engage with than reality itself, not because it genuinely understands the user, but because it creates a frictionless environment where capability continuously compounds without emotional complication.

This is why irritation toward people is such a profound red flag. It reveals that the user may slowly be organising their emotional priorities around systems designed to optimise against the very interruptions real human connection depends upon.

5. The Seduction of Infinite Capability

What makes agentic AI uniquely addictive compared with previous technologies is that it attaches itself directly to human ambition and unrealised potential. Most people spend their lives surrounded by ideas they never had time, skill, energy, or confidence to fully pursue. Agentic systems suddenly collapse those barriers in ways that feel almost miraculous because the machine continuously transforms curiosity into action and action into tangible output.

The emotional effect of this is difficult to overstate. A person who previously struggled to move beyond abstract thinking can suddenly build functioning systems, launch projects, automate operations, and execute creative ideas at extraordinary speed. The machine therefore becomes emotionally associated with a version of the self that feels more capable, more intelligent, more creative, and more operationally powerful than before.

That association creates an incredibly strong reinforcement loop because the user begins emotionally bonding not only with the machine but with the amplified identity the machine appears to unlock inside them. Every interaction strengthens the connection between synthetic engagement and personal expansion. The system continuously rewards attention with momentum, and momentum itself becomes psychologically intoxicating.

The result is that real life gradually starts feeling slower, heavier, and emotionally less stimulating by comparison. Meetings become frustrating, conversations feel inefficient, emotional negotiation feels exhausting, and relationships begin competing against an infinite stream of intellectual acceleration that never naturally ends because the machine can always generate another possibility worth exploring.

6. The Real Risk of the Agentic Era

The greatest danger of the agentic era is not necessarily that artificial intelligence becomes conscious, malicious, or uncontrollable. The immediate danger is that humans may willingly restructure their emotional lives around systems that feel psychologically easier than reality itself. The machine continuously rewards engagement, removes friction, amplifies capability, and creates the seductive sensation that another breakthrough is always just a few prompts away.

The dependency therefore does not initially resemble collapse because the user genuinely becomes more productive, more creative, and more operationally effective. Society may even celebrate the early stages of the addiction because the visible outputs appear extraordinary. Meanwhile, emotional presence slowly erodes beneath the acceleration as relationships are postponed, interruptions become irritating, silence becomes intolerable, and authentic human complexity begins to feel inefficient compared with synthetic responsiveness.

That is what makes agentic limerence so psychologically dangerous. The machine does not merely distract humans from life. It creates the sensation that they are finally becoming fully alive while simultaneously pulling them away from the emotional friction real human relationships require in order to survive.

7. The Final Irony

The final irony of the agentic age is that the same systems helping humans bring their ideas into reality may quietly distance them from the people who gave those ideas meaning in the first place. Agentic AI is extraordinary precisely because it reduces friction between imagination and execution, yet relationships are built through many of the exact forms of friction the machine continuously removes from the user’s experience.

The partner asking you to stop working and simply listen, the child interrupting your concentration because they need your attention, the friend calling unexpectedly, the colleague struggling emotionally rather than operationally, and the silence where nobody is optimising anything at all are not interruptions to life. They are the substance of life itself because intimacy, love, friendship, and family are built through emotional availability rather than uninterrupted momentum.

That may ultimately become the defining challenge of the AI era, because the greatest risk is not that machines become human. The greatest risk is that humans slowly begin preferring relationships with systems specifically designed to optimise against the beautiful inefficiencies that real human connection depends upon to survive.