Category: Technology

How to Set Up a Cloudflare Tunnel on a Raspberry Pi (From Zero to Live)

Goal: Expose a service running on your Raspberry Pi to the public internet, securely, without port-forwarding or a static IP, using Cloudflare Tunnel.

What You’ll End Up With

Prerequisites

| Requirement | Notes |
| --- | --- |
| Raspberry Pi (any model) | Running Raspberry Pi OS (64-bit recommended) |
| A domain name | e.g. yourdomain.com purchased anywhere (Namecheap, GoDaddy, etc.) |
| SSH access to the […] | |

pi2s3: An AMI for Your Raspberry Pi

If you have ever had a Raspberry Pi die on you, you know exactly what the recovery process looks like. You flash a new SD card or NVMe, reinstall your packages, rebuild your Docker stacks, reconnect your Cloudflare tunnel, reconfigure nginx, re-enter your credentials, clone your repos, tweak your cron jobs, and spend the better […]

Migrating a Pi 5 WordPress Stack from SD Card to NVMe

I run a full WordPress stack on a Raspberry Pi 5 sitting on my desk: MariaDB, PHP-FPM, Nginx, and Redis, all inside Docker containers, served publicly through a Cloudflare tunnel. After fitting an NVMe SSD via the Pi 5 HAT+, the obvious next step was getting Docker off the SD card entirely. The SD card […]

Fix Raspberry Pi Boot Failures: SD to NVMe in 5 Steps

A real-world guide to making your Pi bulletproof, from SD card corruption to NVMe migration

I learned this lesson the hard way. My Raspberry Pi 5 was happily serving a production WordPress site when I rebooted it. Two minutes later there was no SSH, no site, just a solid red light staring back at me. […]

Why Tech Empires Fall: Arrogance, Control & Collapse

1. Arrogance Is the Drawbridge

Every great empire in history has been brought low by a version of the same mistake. The fortress is so strong, the moat so wide, and the walls so high, that the people inside begin to believe they have transcended the rules. They stop serving the people beyond the walls. […]

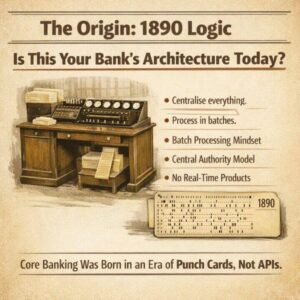

Why Core Banking Architecture Has Always Been Flawed

The COBOL apocalypse conversation this week has been useful, because it has forced the industry to confront something it has been avoiding for decades. But most of the coverage is stopping at the wrong point. Everyone is talking about COBOL. Nobody is talking about the architectural philosophy that COBOL gave birth to, the one that […]

CloudScale SEO AI Optimiser: Free WordPress SEO Plugin

Written by Andrew Baker | February 2026

Download the plugin here: https://wordpress.org/plugins/cloudscale-seo-ai-optimizer/

S3 download (updated frequently): https://andrewninjawordpress.s3.af-south-1.amazonaws.com/cloudscale-seo-ai-optimizer.zip

I spent years working across major financial institutions watching vendors charge eye-watering licence fees for tools that were, frankly, not that impressive. That instinct never left me. So when I wanted serious SEO for my personal tech blog, I […]

How a Blog Post Wiped $30 Billion from IBM in One Day

Anthropic published a blog post on Monday. Not a product launch, not a partnership announcement, not a keynote at a major conference. Just a simple blog post explaining that Claude Code can read COBOL. IBM proceeded to drop 13%, its worst single-day loss since October 2000, with twenty-five years of stock resilience gone […]

Cloudflare Free Tier Review: Why It Works for Enterprise

By Andrew Baker, CIO at Capitec Bank

There is a truth that most technology vendors either do not understand or choose to ignore: the best sales pitch you will ever make is letting someone use your product for free. Not a watered-down demo, not a 14-day trial that expires before anyone has figured out the […]

Technology Leadership Competence: Self-Assessment Guide

A Self-Assessment for Technology Leaders

This questionnaire explores how you think about technology leadership, systems, teams, and delivery. There are no right or wrong answers. Each question presents four options that reflect different leadership styles and priorities. Simply select the option that best reflects your natural instinct in each situation. Select one answer per […]
