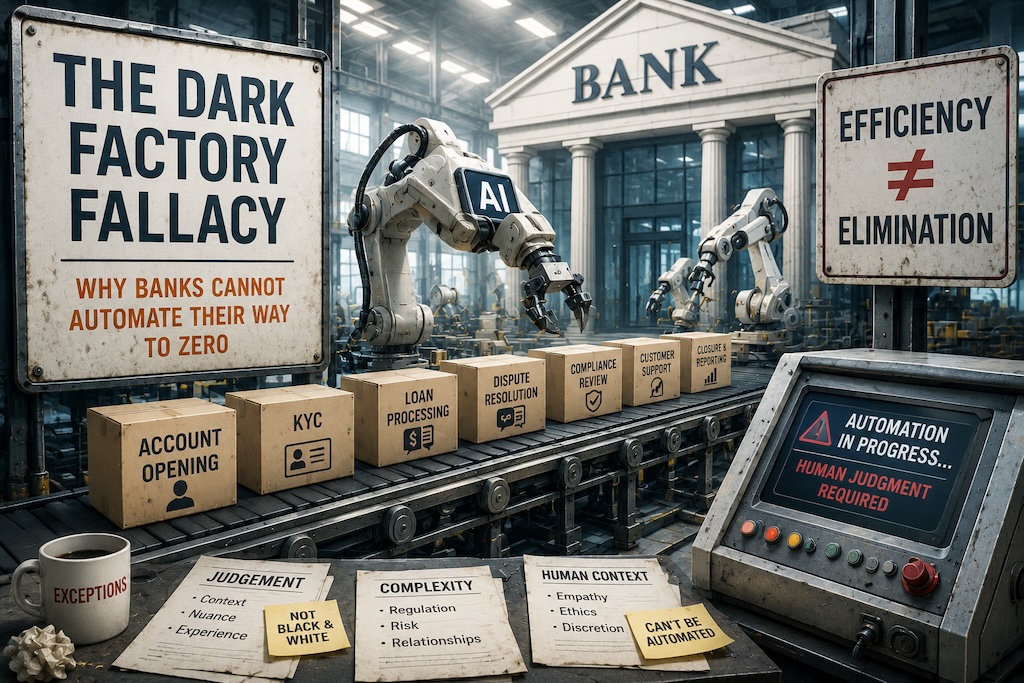

The Dark Factory Fallacy: Why Banks Cannot Automate Their Way to Zero

Operational complexity, not technological immaturity, prevents banks from achieving true dark-factory automation. Financial transactions involve regulatory judgment, contextual ambiguity, and counterparty unpredictability that resist deterministic programming. Unlike manufacturing components with fixed tolerances, banking products mutate continuously through human behavior, legal interpretation, and market volatility, ensuring meaningful human oversight remains structurally irreplaceable regardless of automation investment.

Why the most powerful operational model in financial services already exists, almost nobody is copying it, and the people who should be leading the charge are the biggest obstacle to it.

1. What a Dark Factory Actually Is

In advanced manufacturing, a dark factory is a facility that runs without human beings on the floor. The lights are off because nobody is there. Robots load, weld, assemble, test and package without supervision, without shift changes, without lunch breaks. The Fanuc plant in Yamanashi, Japan, has been operating with minimal human intervention since 2001, producing robot components with robots. The machines even perform their own maintenance scheduling.

The reason this works is deceptively simple: the product is deterministic. A motor housing has defined tolerances. A weld has a specified heat profile. Every input, every transformation, every output can be specified precisely enough that a machine can execute it without ambiguity. There is no judgement required because all the judgement happened upstream, during engineering and design.

So the question worth asking is: why has no large bank actually pulled this off at scale? And the more uncomfortable question underneath it: do banks even understand what is stopping them?

2. The Category Error Banks Keep Making

When banks talk about automation, they almost always mean one of two things. The first is robotic process automation applied to high volume, low complexity tasks: screen scraping, form filling, data extraction from PDFs, things that a rule engine can handle. The second is AI applied to decision support, which means fraud scoring, credit risk modelling, document classification. Both of these are genuinely useful. Neither of them produces a dark factory.

The category error is treating automation as a volume problem when the actual problem is architectural. Banks are not primarily in the business of processing deterministic transactions. They are in the business of handling exceptions. Every policy has an edge case. Every rule has a jurisdiction where it does not apply cleanly. Every customer interaction has a context that makes the standard response wrong. The volume work is increasingly automated. The hard work, the expensive work, the work that creates liability, lives in the gap between what the system expects and what actually happens.

When you automate a high volume process and hand off exceptions to a human team, you have not reduced headcount. You have reclassified it. The operations team that used to process the volume now handles only the exceptions the automation could not manage, which means they are working on the hardest cases all day with no easy wins to balance the cognitive load. Most automation projects quietly create this dynamic and never measure it.
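The hand-off described above can be sketched in a few lines. This is a minimal illustration, not any bank's actual rules engine: the `Payment` fields, the limits, and the country list are all hypothetical, chosen only to show that whatever falls outside the rules lands on a human queue.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Payment:
    amount: float
    country: str
    kyc_failures: int


# Hypothetical policy boundary. Real limits would come from policy and regulation.
AUTO_APPROVE_LIMIT = 10_000.0
SUPPORTED_COUNTRIES = {"ZA", "GB"}
MAX_KYC_FAILURES = 2


def route(payment):
    """Return ("auto", None) for straight-through processing, else ("human", reason)."""
    if payment.kyc_failures > MAX_KYC_FAILURES:
        return ("human", "identity verification failed repeatedly")
    if payment.country not in SUPPORTED_COUNTRIES:
        return ("human", "jurisdiction not covered by the rules")
    if payment.amount > AUTO_APPROVE_LIMIT:
        return ("human", "amount outside policy boundary")
    return ("auto", None)


def exception_rate(payments):
    """Fraction of payments that end up in the human exception path."""
    routed = [route(p)[0] for p in payments]
    return routed.count("human") / len(routed)
```

Tracking `exception_rate` over time, alongside how long each escalated case takes to close, is one way to measure the dynamic that most automation projects never measure: the automated share can look excellent while the human queue holds nothing but the hardest cases.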

3. Where Headcount Actually Lives

There is a useful exercise: ask a bank executive to draw the org chart of a fully automated operation and explain where people sit. The honest answer, the one they rarely give out loud, is that people sit in the exception path. They review the edge cases the rules engine escalated. They approve the transaction that fell outside the policy boundary. They investigate the alert that did not close automatically. They call the customer whose identity verification failed three times.

This is not a criticism. It reflects a genuine truth about how financial services organisations are designed. The Basel III and IV frameworks, FATF guidance, and every local regulatory regime in every market assume that a human being is ultimately accountable for consequential decisions. That assumption is embedded in policy, in audit frameworks, in employment contracts, and in the mental model of every risk committee that has ever sat around a table. The human in the loop is not an inefficiency. It is, by design, a compliance requirement.

Which raises an obvious question: if the regulatory architecture requires human accountability, and if the complexity of financial products generates continuous exceptions, then what is the realistic path to operational models that do not grow headcount linearly with volume?

4. The Model That Actually Works

The answer gets concrete here, because the model already exists. It is not theoretical. It is running in production in a small number of technology organisations, including some inside financial services, and it does not look like automation at all from the outside.

The model is engineering for fungibility rather than automation for volume. A service built for fungibility has the following properties. The business logic is fully captured in code, with no institutional knowledge living in someone’s head or in an undocumented spreadsheet maintained by a single analyst. The deployment pipeline is fully automated, including testing, environment promotion, and rollback. The documentation describes not just how the system works but why every significant design decision was made, what alternatives were considered, and what constraints shaped the outcome. Any competent engineer, arriving with no prior context, can read the documentation, understand the service, make a change, run the tests, validate the deployment, and move on to something else.

The result is a service with zero assigned headcount. Not because it never needs human attention, but because it does not need a dedicated human. The attention it requires is episodic, context free, and transferable. This is operationally indistinguishable from a dark factory for most practical purposes. The lights are not literally off. But no one is watching the lights.

5. Why Is This Not Already Obvious?

It should be. The ingredients are not exotic. Good documentation, automated testing, clean deployment pipelines, and clear architectural decision records are not cutting edge ideas. They are the baseline practices of any mature engineering organisation. So why are they so rare at scale inside large banks?

The first answer is cultural. Most large financial institutions were not built by engineers. They were built by bankers who later hired engineers to automate processes that bankers had already designed for human execution. The engineering culture that would naturally produce fungible, documented, autonomously operable services was always downstream of a business culture that measured capability in terms of people assigned to problems.

The second answer is incentive structures. In most large organisations, a team leader’s influence, budget, and career trajectory are directly correlated with the number of people they manage. Headcount is not just an operational resource. It is a proxy for organisational status. A leader who runs a team of forty has more power than a leader who runs a team of four, even if the team of four operates three times as much infrastructure with better reliability. The incentive to accumulate headcount is structural and largely invisible to the people experiencing it.

Look closely enough and a pattern emerges that nobody in a large organisation is incentivised to measure. Headcount and output are inversely correlated. The teams producing the most are almost always the smallest. They move faster, make decisions without committees, carry full context in their heads, and have no coordination overhead to absorb. The teams producing the least are large, slow, and structurally committed to the idea that their size reflects their importance. They are also, without exception, the teams with the most complex operating models, the longest approval chains, and the most elaborate descriptions of why their work cannot be simplified.

Complexity and brilliance are inversely correlated in the same way. A brilliant solution is almost always a simple one. It reduces the problem to its essential components and solves those directly. Complexity is what accumulates when a series of mediocre solutions is layered one on top of another over time, each one added to handle an edge case the previous solution could not manage, none of them ever removed. The team that owns that complexity becomes expert in navigating it and mistakes that expertise for value. But the expertise they have developed is expertise in a problem they collectively created. It is not the same thing as engineering ability and it should not be rewarded as though it were.

The performance review system makes this worse. The teams that are large, slow, and complex tend to give exceptional performance ratings to roughly half their members, because in the absence of genuine output metrics, performance becomes a political negotiation and political negotiations reward loyalty and tenure rather than contribution. The result is that the organisation’s most generously reviewed people are often in its least productive teams, and the engineers doing unnatural things with one headcount in four months are evaluated on a curve designed for a completely different kind of work.

The third answer is risk aversion applied incorrectly. There is a legitimate argument that critical financial systems require dedicated expertise and continuity of knowledge. But this argument gets extended, usually without examination, to services that are not actually critical, are not actually complex, and do not actually require continuity of human ownership. The risk argument becomes a reflex rather than an analysis.

6. The Interception Problem

Now add AI into this environment and watch what happens. AI should, in theory, accelerate the move toward fungible, low headcount operation. The promise is genuine: reasoning systems that can generate code, write documentation, model business logic, run compliance checks, and operate autonomously across a defined scope. In a clean environment, pointed at a clear target, with nothing in the way, the results are extraordinary.

But most large organisations are not clean environments. They are environments full of legacy functions looking for relevance, and AI projects are the most visible target available. The interception happens early, and it always sounds reasonable. Someone needs to first document all the existing processes before AI can be applied to them. Someone needs to redesign the workflows to make sure they are AI ready. Someone needs to ensure the project has proper governance, a steering committee, a phased roadmap, a business case, a risk assessment, a change management plan. The complexity is always described as greater than the AI team appreciates. The timeline is always longer than the AI team proposes. The dependencies always run through the functions that are doing the intercepting.

This is not sabotage in any conscious sense. It is organisational immune response. Legacy functions, whether they are process engineering, business analysis, program management, or CX design, understand at some level that AI threatens the justification for their existence. The rational response is to insert themselves into the AI project before the AI project demonstrates that they are not needed. If you can become the prerequisite for the AI work, you cannot be made redundant by it. You become the gatekeeper of your own replacement.

The tell is the language. “It is much more complicated than you think.” “You cannot just do things without planning.” “We need to first understand the as-is before we can design the to-be.” These are not analytical observations. They are delay tactics dressed in the vocabulary of rigour. The complexity they are describing is almost always the complexity they themselves introduced into the system over years of process accretion, and they are now positioning that complexity as an argument for their continued involvement in dismantling it.

7. The Hiring Freeze Revelation

The same dynamic plays out more quietly in headcount management, and the most revealing moment in any large organisation is the hiring freeze. Non technical leaders, when placed under one, often discover for the first time that the headcount they were accumulating was not actually necessary.

A freeze is imposed. A leader who had been requesting three additional people for six months finds that the work gets done anyway, that the existing team absorbs the scope, that the services continue to operate. The freeze removes the option to add headcount and the work adjusts to fit the resource that exists. The implication is uncomfortable. If a hiring freeze reveals that the headcount was not needed, then the headcount requests were not driven by operational necessity. They were driven by something else: status, comfort, the instinct to buffer against uncertainty with people rather than with process.

The leader was not lying about needing more people. They genuinely believed it. But the belief was wrong and nobody in the system was providing feedback that it was wrong, because the system rewards headcount accumulation rather than headcount efficiency. The only way to surface this is to remove the option. Organisations that want to move toward fungible, low headcount operation often need to treat hiring freezes as deliberate and sustained policy rather than as crisis response. This is counterintuitive enough that it almost never gets stated plainly, but the evidence for it is everywhere.

8. What Scorched Earth Actually Looks Like

There is a different approach entirely, and it requires a different mental model. When you truly attack a target, you take the inputs directly from the client and you get them where they need to be with nothing in the way. No process review. No as-is documentation. No steering committee. No phased roadmap. You remove every intermediary function and drive straight at the outcome. The path should be as close to zero friction as engineering and regulatory reality allow.

We rebuilt the entire internet banking platform of Capitec with one headcount in four months. Not a prototype. Not a proof of concept. A production system serving millions of clients. The system pen tests itself. It writes its own compliance documents. It knows exactly where PII flows because it inspects the data on the wire. Client chat is fully automated. Cash flow models run on geometric Brownian motion algorithms current enough to reflect actual financial modelling practice. There were no project managers involved, no business analysts, no business engineers, no process engineers, no CX practitioners, no UX designers. The system used the existing platform APIs to construct every asset it needed. It is an unnatural piece of engineering, and it was designed to be.

The point was not to prove a benchmark. The point was to find the edge, to understand what was actually possible when you removed every function whose primary contribution is coordinating between other functions, and replaced the entire coordination layer with a system that reasons about its own outputs. We found the edge. It is much further out than the interception culture would have you believe.

This is what the dark factory looks like in financial services. Not a facility with the lights off. A system with no coordinators, no gatekeepers, no legacy functions inserting themselves as prerequisites. Just inputs, a target, and the engineering to connect them directly.
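For readers unfamiliar with the geometric Brownian motion mentioned above, here is a minimal textbook sketch of the technique. It is not the platform's implementation, which is not described here. The function names and every parameter value (starting balance, drift `mu`, volatility `sigma`, horizon) are hypothetical. Each step applies the standard exact update, multiplying the previous value by exp((mu - sigma^2 / 2) * dt + sigma * sqrt(dt) * Z), where Z is a standard normal draw.

```python
import math
import random


def simulate_gbm_path(s0, mu, sigma, horizon_years, steps, rng):
    """Return one simulated path (steps + 1 values) under geometric Brownian motion."""
    dt = horizon_years / steps
    drift = (mu - 0.5 * sigma ** 2) * dt  # deterministic part of the log-return
    vol = sigma * math.sqrt(dt)           # scale of the random shock per step
    path = [s0]
    for _ in range(steps):
        z = rng.gauss(0.0, 1.0)           # standard normal draw
        path.append(path[-1] * math.exp(drift + vol * z))
    return path


def mean_terminal_value(s0, mu, sigma, horizon_years, steps, n_paths, seed=0):
    """Monte Carlo estimate of the expected value at the end of the horizon."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_paths):
        total += simulate_gbm_path(s0, mu, sigma, horizon_years, steps, rng)[-1]
    return total / n_paths
```

Calling `simulate_gbm_path(100.0, 0.05, 0.2, 1.0, 12, random.Random(1))` yields one illustrative twelve-month path. Values stay positive by construction, which is one reason the model is a common starting point for balances and prices.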

9. What Zero Headcount Growth Actually Requires

Zero headcount growth is not a cost cutting target. It is an architectural outcome, and it requires an organisational stance that most banks are not yet willing to take.

Services must be designed so that their operational complexity does not grow with transaction volume, which means investing in observability, automated alerting, and self healing behaviour rather than in operations teams that respond to incidents manually. Knowledge must live in the system rather than in the people who built it, which means documentation treated as a first class engineering artefact and onboarding designed for a developer who has never seen the service before. The deployment pipeline must be owned by the team that writes the code, because every coordination step in a shared release process is a potential job. And AI projects must be protected from interception by legacy functions seeking relevance, which means leadership that is willing to name the pattern and refuse to accommodate it.

None of this is technically difficult. All of it is organisationally difficult. The neobanks that built this way did not get there by automating legacy processes. Nubank reached 100 million customers with a technology team a fraction of the size of any traditional equivalent. Monzo designed for operational ownership from the first line of code. They got there by refusing to build the legacy in the first place.

For incumbents the path is harder but the direction is the same. Find the targets where you can go scorched earth. Protect the teams doing it from the functions trying to intercept them. Measure output per person rather than people per output. And be honest about what the complexity arguments really are, because most of them are not about complexity at all. They are about the fear of becoming unnecessary, and that fear, however understandable, is not a reason to slow down.

Andrew Baker is Group CIO at Capitec Bank and writes about technology leadership, cloud infrastructure, and organisational design at andrewbaker.ninja and on Substack at @futureherman.