In technology, there is a tendency to solve a problem badly through gross simplification, come up with a catchy one-liner, and then broadcast it as doctrine or principle. Nothing ticks more boxes in this regard than the principle of least privileges. The enterprise-scale deadlocks created by a crippling implementation of least privileges are almost certainly lost on its evangelists. This blog will try to put an end to the slavish efforts of the many security teams that ration out micro permissions and hope the digital revolution can fit into some break glass approval process.

What is this “Least Privileged” thing? Why does it exist? What are the alternatives? Wikipedia gives you a good overview of this here. The first line contains an obvious and glaring issue: “The principle means giving a user account or process only those privileges which are essential to perform its intended function”. Here the principle is being applied equally to users and to processes/code. The principle also says to give only those privileges that are essential. In other words, it tells us to treat human beings and code as the same thing, and to give humans only “essential” permissions. Firstly, who on earth sets the bar for essential, and how do they ascertain what is and what is not essential? Do you really need to use storage? Do you really need an API? If I give you an API, do you need Puts and Gets?

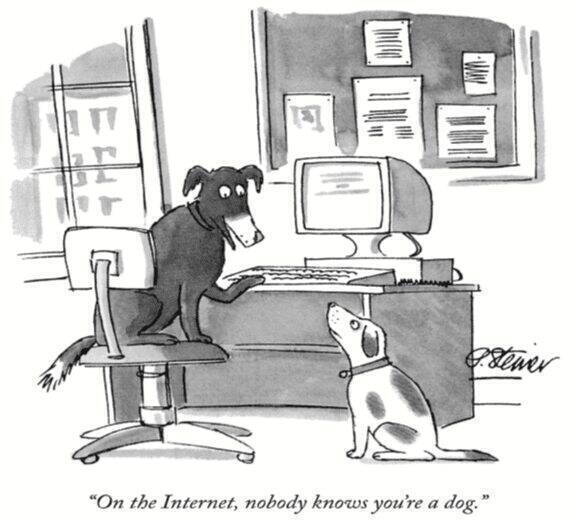

Human beings are NOT deterministic. If I have a team of humans that can operate under the principle of least privileges, then I don’t need them in the first place. I can simply replace them with some AI/RPA. Imagine the brutal pain of a break glass activity every time someone needs to do something “unexpected”. “Hi boss, I need to use the bathroom on the 1st floor – can you approve this? <Gulp> Boss you took too long… I no longer need your approval!”. Applying least privileges to code would seem to make some sense, BUT only if you never update the code, or, if you do update it, you make sure you have 100% test coverage.

So why did some bright spark want to duct tape the world to such a brittle, pain-yielding principle? At the heart of this are three issues: Identity, Immutability, and Trust. If there are other ways to solve these issues, then we don’t need the pain and risks of trying to implement something that will never actually work, creates friction and, critically, creates a false sense of security. Least Privileges will never save anyone. You will just be told that if you could have performed this security miracle then you would have been fine. But you cannot, and so you are not.

What’s interesting to me is that the least privileges lie is so widely ignored. For example, just think about how we implement user access. If we truly believed in least privileges, then every user would have a unique set of privileges assigned to them. Instead, because we acknowledge this is burdensome, we approximate the privileges that a user will need using policies which we attach to groups. The moment we add a user to one of these groups, we are approximating their required privileges and starting to become overly permissive.
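To make that approximation concrete, here is a minimal Python sketch. Everything in it is made up for illustration: the group names, the users, the permission strings and the "needs" table are assumptions, not taken from any real IAM system. It simply unions the policies of each user's groups and subtracts what that user actually needs.

```python
# Hypothetical data: every name and permission string below is illustrative.
GROUP_POLICIES = {
    "developers": {"storage:get", "storage:put", "queue:send", "logs:read"},
    "analysts": {"storage:get", "reports:read"},
}

USER_GROUPS = {
    "alice": ["developers"],
    "bob": ["developers", "analysts"],
}

# What each user would get under a "true" least privileges model (assumed known).
USER_NEEDS = {
    "alice": {"storage:get", "logs:read"},
    "bob": {"storage:get", "storage:put", "reports:read"},
}


def granted(user):
    """Union of the policies of every group the user belongs to."""
    perms = set()
    for group in USER_GROUPS[user]:
        perms |= GROUP_POLICIES[group]
    return perms


def excess(user):
    """Permissions the user holds via groups but does not actually need."""
    return granted(user) - USER_NEEDS[user]


if __name__ == "__main__":
    for user in sorted(USER_GROUPS):
        print(user, "has excess permissions:", sorted(excess(user)))
```

Even in this toy setup, both users end up holding permissions they never asked for, and adding a user to a second group only widens the gap.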

Let’s be clear with each other: anyone trying to implement least privileges is living a lie. The extent of the lie normally only becomes clear after the event. So this blog post is designed to re-point energy towards sustainable alternatives that work, and that also remove the need for the myriad micro permissive handbrakes (which routinely get switched off to debug outages and issues).

Who are you?

This is the biggest issue and remains the largest risk in technology today. If I don’t know who you are, then I really want to limit what you can do. A root/super user account takeover is a doomsday scenario for any organisation. So let’s limit the blast zone of these accounts, right?

This applies equally to code and humans. For code, this problem was solved a long time ago, and if you look

Is this really my code?