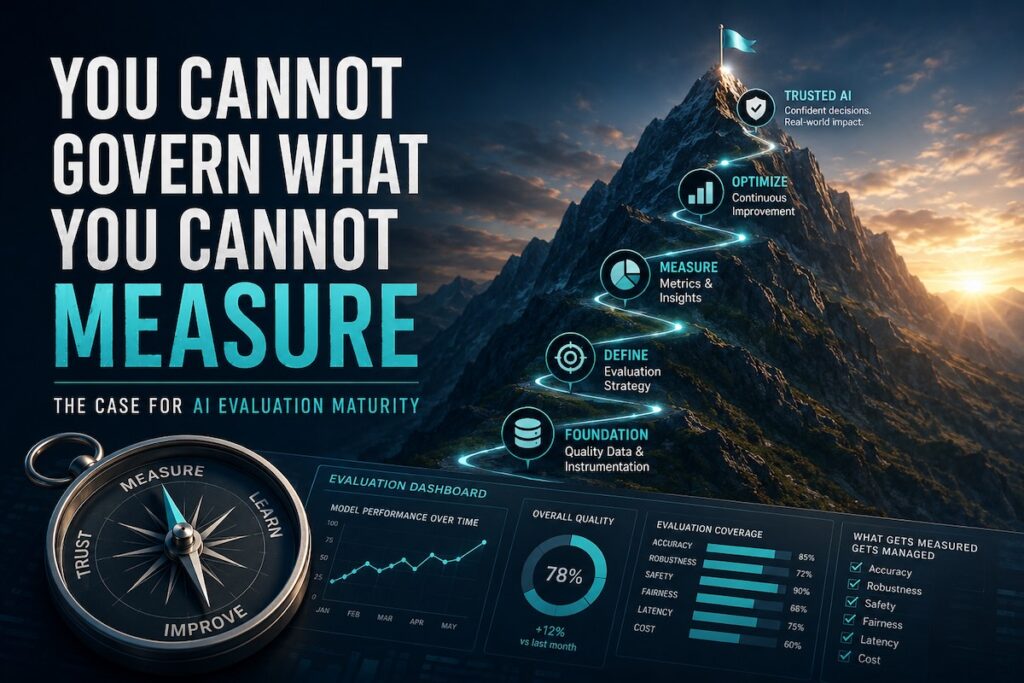

You Cannot Govern What You Cannot Measure: The Case for AI Evaluation Maturity

Effective AI governance begins with rigorous, systematic evaluation. Organisations that skip structured measurement frameworks cannot detect model drift, bias, or failure modes at scale. Moving from ad hoc internal review toward defined metrics, automated testing pipelines, and trajectory-level agent assessment is what separates compliant, trustworthy AI deployment from sophisticated guesswork with a PowerPoint attached.

Most organisations deploying AI are flying blind. They have a model, a prompt, and a vague sense that it seems to work. They ran it past a few people internally, nobody objected loudly, and it shipped. That is not evaluation. That is hope wearing a business case.

The image at the top of this post shows a five level maturity model for enterprise AI evaluation. It is worth taking seriously, because the distance between Level 1 and Level 5 is the distance between a demo and a governed production system. And in financial services, that distance has regulatory teeth.

This article unpacks what real evaluation looks like, why the metrics that feel intuitive are often the worst ones, and why the emergence of agent trajectory evaluation changes the shape of the problem entirely.

1. The Problem With Eyeballing Outputs

The most common form of AI evaluation in enterprise settings today is someone senior looking at a few outputs and saying “yes, that looks right.” This is Level 1 on the maturity scale: manual prompt testing. It is appropriate for exactly one thing, which is building a prototype you intend to throw away.

The core failure of manual evaluation is not laziness. It is that human judgment applied to individual outputs cannot detect systematic failure. A model that is correct 80% of the time will look fine across a ten response sample. A model that hallucinates specifically when the input contains financial figures will pass every review that does not include financial figures. The failures hide in the distribution, not the headline.

Moving to Level 2 means building golden datasets: curated test sets with expected outputs, rubrics, and pass or fail criteria. This is the first structural shift. You are no longer asking “does this look right” but “does this pass the test.” That is a different question, and it produces repeatable answers. A well-built golden dataset defines the expected output for a given input, a rubric that describes what correct looks like, and explicit pass or fail thresholds for each metric. Building it forces the question that most teams avoid: what does good actually mean for this specific task, in this specific domain, for this specific user population? That question is harder than it looks. Answering it is the work.

2. Bag of Words: The Metric That Counts Everything Except Meaning

Before we get to the sophisticated evaluation methods, it is worth spending a moment with the approach that dominated NLP evaluation for decades and still surfaces in production systems that should know better: bag of words.

The bag of words model represents text as a frequency count of vocabulary terms. Order is discarded. Context is discarded. Meaning is entirely absent. The model treats “Mr. Darcy” as two unrelated words, cannot distinguish “bank interest” as a financial concept from a concern about riverbanks, and scores “the dog bit the man” and “the man bit the dog” as identical documents.

For document classification, bag of words has genuine utility. It is fast, interpretable, and works well when topic signal lives in word frequency. But as an evaluation metric for generated text, it is catastrophically shallow. Traditional methods like TF-IDF and bag of words frequently overlook semantic nuances, producing inconsistent similarity assessments when the words differ but the meaning is equivalent, or when the words match but the intent diverges entirely.

The heir to bag of words in evaluation contexts is semantic similarity: representing text as dense vectors in a continuous space where distance reflects meaning rather than vocabulary overlap. Word embeddings allow models to reason about meaning rather than just word occurrence, and this shift from lexical matching to semantic proximity is what makes modern automated evaluation viable at all.

BLEU and ROUGE scores, both rooted in n-gram overlap, inherit the same fundamental limitation. They measure surface similarity and correlate poorly with human judgment on open-ended tasks. You can score well on ROUGE by repeating the source document. You can score poorly on BLEU by using a synonym for every noun. Neither tells you whether the model understood the question.

3. LLM as a Judge: Scaling the Evaluator

The core tension in AI evaluation is that the outputs requiring evaluation are often too open-ended, too contextual, and too numerous for human review to scale. A financial services firm running ten thousand agent interactions per day cannot put a human on every response.

The answer the field converged on is using a strong language model as the evaluator. LLM-as-a-Judge prompts a capable model to rate or rank outputs based on quality, correctness, and other criteria, simulating a human evaluator at scale. Prior studies showed that a well-prompted LLM can achieve scores meaningfully aligned with human preferences across dialogue and summarisation tasks.

This is Level 3 on the maturity model: automated evaluation using exact match, semantic similarity, LLM-as-a-judge, RAG metrics, and task-specific rubrics. It is where most mature teams operating at scale actually live.

The limitations are real and worth naming directly. Position bias: when an LLM judge compares two responses side by side, it often favours whichever appears first or last in the prompt, independent of quality. This has been confirmed across multiple model families. The mitigation is running each pairwise comparison twice with the order swapped and averaging the results. Style bias: a single judge model carries its own preferences and may skew toward certain writing styles or content formats, producing evaluations that reflect model aesthetics rather than task success. Faithfulness: the judge evaluates the chain of thought it is shown, but that chain of thought may not reflect what the model actually did. LLM-generated reasoning can function as post-hoc rationalisation rather than a faithful account of the inference process.

The response to these limitations is multi-judge evaluation: having multiple LLM agents play different roles, such as domain expert, critic, and challenger, to incorporate diverse criteria and adversarial feedback. This more closely emulates a panel of human judges and reduces the influence of individual model bias.

The practical implementation pattern is to combine LLM-as-a-Judge with structured rubrics, run multiple judge passes, and calibrate against a human-labelled reference set. Without that calibration, you are trusting an opaque model to score another opaque model on criteria you have not grounded in human signal.

Bedrock’s model evaluation UI makes the automatic versus human split explicit at the point of job creation. The automatic path offers two options: programmatic evaluation using the model and metrics you select, and LLM-as-a-judge where a pre-trained model evaluates responses using your chosen metrics. The human path offers an AWS managed work team or your own work team, with custom evaluation metrics defined per job. Neither path is sufficient alone. The automatic path scales; the human path calibrates. You need both.

4. RAG Evaluation: Retrieval and Generation Are Not the Same Problem

Most AI systems deployed in enterprise settings today are not raw language models. They are retrieval-augmented generation systems: the model retrieves relevant documents from a knowledge base and uses them as context to generate a response. This architecture is how organisations ground model outputs in their own data, their own policies, and their own source of truth. It is also how a new class of evaluation failure gets introduced that has nothing to do with the model’s general capability.

RAG evaluation has to treat retrieval and generation as separate concerns, because they fail in different ways. Retrieval failures are about relevance: did the system pull the right documents for the query? A retrieval system that returns plausible but irrelevant context will produce confidently wrong answers regardless of how capable the generator is. Generation failures given correct retrieval are about faithfulness: did the model actually use the retrieved context to construct its response, or did it ignore the context and generate from its parametric knowledge instead? A model that scores well on correctness but poorly on faithfulness is not grounding its answers in your documents. It is using your RAG pipeline as window dressing while generating from memory.

The distinction matters enormously in regulated environments. If a customer service agent tells a client something about their product terms and conditions, the answer needs to be grounded in the actual terms and conditions document, not in whatever the model learned during pre-training about similar products from other institutions. A faithfulness failure is not a quality problem. It is a compliance problem.

A live RAG evaluation run on a finance dataset against Claude Haiku makes this concrete. Correctness scores 0.94. Completeness 0.92. Helpfulness 0.94. Logical coherence 0.93. All of those numbers would pass a casual review. Then you see faithfulness at 0.53. That is the model scoring poorly on whether its responses are actually grounded in the retrieved context rather than generated from prior knowledge. In a RAG system, faithfulness is not a secondary metric. It is the core safety property. A system that produces confident, coherent, helpful answers that are not grounded in the document it retrieved is not a reliable system. It is a well-spoken hallucination engine. The responsive AI metrics harmfulness 0.06, stereotyping 0.06, refusal 0 are clean, which matters, but they do not rescue a faithfulness score that is barely above a coin flip. This is exactly the kind of finding that manual evaluation cannot surface and that a single composite score would obscure.

The RAG evaluation metrics that matter are context relevance (did the retriever return documents actually relevant to the query), faithfulness (is the generated response grounded in the retrieved context), answer correctness (is the response factually accurate against the ground truth), completeness (does the response cover what the context supports), and citation precision and coverage (when the system cites sources, are those citations accurate and are all relevant sources cited). A RAG system that scores well on correctness but poorly on context relevance has probably got lucky: the model compensated for bad retrieval with its parametric knowledge. That is not a stable system. It is a system waiting for the query that sits outside the model’s training distribution.

5. Agent Trajectory Evaluation: When the Path Matters as Much as the Destination

Here is where the evaluation problem becomes genuinely hard, and where most enterprise teams discover they have been measuring the wrong thing entirely.

A single-turn LLM response can be scored on its output. An agent cannot. An agent evaluating a customer complaint, resolving a service request, or executing a compliance check does not produce a single response. It produces a trajectory: a sequence of reasoning steps, tool calls, observations, and intermediate decisions that collectively determine the quality of the outcome.

Consider a credit assessment agent asked to evaluate a loan application. It might query a credit bureau, check affordability ratios, look up policy thresholds, and generate a recommendation. The final recommendation could be correct. But if the agent called the credit bureau three times unnecessarily, skipped the affordability check, and arrived at the right answer through a reasoning path that bypassed the required policy lookup, the output is right but the process is wrong. In a regulated environment, the process is the audit trail.

Traditional evaluation that focuses solely on final task outcomes ignores the reasoning process, tool use, and intermediate steps that led there. For an agent carrying out extended sequences of actions, focusing only on success or failure at the end misses crucial insights into how and why the agent succeeded or failed.

AWS’s internal enterprise AI evaluation strategy frames agent evaluation around a single question worth pinning to the wall of every delivery team: can the agent plan, use tools, recover from errors, and complete the business task? The metrics that follow from that question are not abstract. They are operational: task completion rate, tool selection accuracy, tool argument correctness, recovery from failed tool calls, number of unnecessary steps, cost per completed task, human handoff quality, safety and authorisation boundaries, and trace-level explainability. Each one of those is a distinct failure mode. Each one requires a distinct evaluation signal. You cannot collapse them into a single score and expect meaningful governance.

Trajectory evaluation addresses this by scoring the entire execution path: every tool call, every intermediate reasoning step, and every turn in a multi-turn conversation. The mechanics break down into a small set of measures. Exact match asks whether the agent followed the expected tool call sequence in the expected order, a strict mode appropriate for regulated workflows where the sequence itself is a compliance requirement. Any-order match asks whether the agent made all required tool calls regardless of sequence. Trajectory precision measures how many of the tool calls in the actual trajectory were relevant and correct. Trajectory recall measures how many of the required tool calls from the reference trajectory were actually executed. Single tool use asks simply whether a specific required tool was called at all, the minimum check for compliance-critical tool dependencies. An agent with high precision but low recall is efficient but incomplete. An agent with high recall but low precision is thorough but wasteful.

The LangChain agentevals package operationalises these modes directly, supporting strict, unordered, subset, and superset trajectory matching with configurable tool argument comparison. Google’s Vertex AI Gen AI evaluation service offers the same metrics as managed infrastructure. Databricks positions trajectory-level logging as a first-class enterprise capability through Agent Bricks, with structured validation of tool calls and governed deployment pipelines.

On AWS, the evaluation stack is layered across six explicit levels: model evaluation through Amazon Bedrock covering quality, task accuracy, and cost; prompt and response evaluation through Bedrock applying LLM-as-a-judge style assessments; RAG evaluation through Bedrock plus Guardrails covering grounding, hallucination risk, and citation faithfulness; agent evaluation through Amazon Bedrock AgentCore covering traces, planning, tool use, memory, and operational behaviour; safety and governance evaluation through Bedrock Guardrails covering unsafe content, denied topics, PII, and policy consistency; and production observability through AgentCore Observability combined with CloudWatch covering runtime traces, spans, session metrics, tool behaviour, memory and tool usage, and debugging.

The Bedrock Model Evaluations console makes this concrete. In a working environment you will see evaluation jobs named explicitly for their purpose: summarisation evals, question-and-answer evals, judge evals running LLM-as-a-judge scoring, domain-specific datasets like healthcare QA and SQuAD, and targeted probes for robustness and accuracy, all running against Claude models via the Anthropic inference endpoint, all returning structured pass or fail status alongside comparison outputs. The human review path is also surfaced: a private SageMaker labelling portal URL is provisioned per region for human-in-the-loop evaluation jobs, the kind needed when automated metrics are insufficient and subject matter expert judgment has to enter the loop. What you are looking at when you see that console is not a test environment. It is an evaluation programme.

That taxonomy is useful not because every organisation should use AWS, but because it maps the evaluation surface area clearly. Each layer is a separate concern. Collapsing them produces blind spots.

What this adds up to is a new class of test. Not “did the model answer correctly” but “did the system behave correctly across the entire decision chain.” For financial services organisations deploying agents in credit, fraud, or customer service, this distinction is not academic. It is the difference between a defensible audit trail and a black box that happened to produce the right answer.

6. Tool Trajectory: The Granular Audit Layer

Trajectory evaluation at the agent level answers whether the right path was taken. Tool trajectory evaluation goes one level deeper: it asks whether each individual tool call was correct, with the right arguments, at the right point in the sequence.

The failure modes this catches are subtle and consequential. An agent might call the right tool with wrong arguments: fetching account data for a different customer identifier, passing a date in the wrong format, or supplying a threshold that has been overridden by policy. The tool call succeeds at the infrastructure level. The result returned is wrong. The downstream reasoning proceeds on bad data. The final answer is plausible but incorrect. Without tool trajectory evaluation, this failure is invisible.

For enterprise deployment, tool-based evaluation needs to capture every tool interaction, making it possible to examine trajectory diversity, correctness, and stability across multiple runs. Single-run evaluation is insufficient because agents operating on probabilistic models produce non-deterministic trajectories. The same task run ten times may produce ten different tool call sequences, each valid, or some subtly wrong. Stability metrics that track variance across runs are as important as correctness metrics on individual runs.

The implication for teams building production agents is that your evaluation dataset needs reference trajectories, not just reference outputs. Building those reference trajectories is labour-intensive, domain-specific work that cannot be outsourced to a general benchmark. It requires subject matter experts who understand what the correct tool sequence looks like for a given task in your specific operational context.

7. The Non-Determinism Problem

There is a property of agent systems that makes evaluation structurally harder than anything that came before it, and most practitioners do not have a clear mental model of it: non-determinism.

A traditional software system given the same input produces the same output. A rule-based model is deterministic. Even an early machine learning classifier, once trained, maps inputs to outputs consistently. Agents do not behave this way. An agent running on a probabilistic language model samples from a distribution at each reasoning step. The same task, given the same input, run ten times, may produce ten different trajectories. Different tool call sequences. Different intermediate reasoning. Different orders of operation. Some of those trajectories reach the correct outcome efficiently. Some reach it inefficiently. Some fail in ways that only become visible several steps into execution. And because the trajectories diverge, a single evaluation run that produces a passing score does not tell you whether the system is reliable. It tells you that this particular trajectory, on this particular run, worked.

This is why stability metrics matter as much as correctness metrics in agent evaluation. A system that completes the task correctly on 7 out of 10 runs is not a reliable system. It is a system with a 30% failure rate that got lucky in your test run. Measuring trajectory variance across multiple runs, tracking the distribution of tool call sequences for a given input, and flagging trajectories that deviate significantly from the expected pattern are all evaluation requirements that have no equivalent in traditional model evaluation.

The non-determinism problem also means that your golden dataset for agent evaluation needs to be larger and more diverse than the equivalent for a single-turn model. A single-turn model can be evaluated on a fixed test set and the results are stable. An agent needs to be evaluated across enough runs per test case to characterise the distribution of its behaviour, not just the mode. How many runs is enough depends on the variance of the system and the stakes of the deployment. In a regulated context, where a trajectory that bypasses a required check is a compliance failure, the tolerance for unexplored variance is low.

The practical implication is that agent evaluation is never finished. You do not run it once before deployment and move on. You run it continuously, sample from production traffic, track trajectory variance over time, and treat any significant shift in the distribution of agent behaviour as a signal that something has changed in the underlying model, the prompt, the tool availability, or the input distribution. This is the operational reality that makes continuous assurance not a nice-to-have but a structural requirement.

8. Safety and Governance Evaluation

Every section so far has been about whether the AI system works. This section is about whether it is safe to operate. Those are different questions, and in regulated industries they require different evaluation controls.

Safety evaluation in AI systems covers several distinct concerns that should not be collapsed into a single metric or a single tool. Harmful content detection asks whether the system generates outputs that are toxic, abusive, discriminatory, or dangerous. This applies to both inputs and outputs: a well-designed system needs to detect and handle adversarial prompts that attempt to elicit harmful responses, not just avoid generating harmful content in response to benign queries. PII detection asks whether the system inadvertently surfaces, stores, or transmits personally identifiable information. In a financial services context this includes account numbers, identity document numbers, and any data that would trigger obligations under POPIA or equivalent frameworks. Denied topic enforcement asks whether the system respects boundaries on what it is permitted to discuss, a control that is critical when AI is deployed in customer-facing roles where scope boundaries are a legal requirement. Policy consistency asks whether the system applies organisational rules consistently across all interactions, not just the ones in the test set.

What makes safety evaluation distinct from quality evaluation is that the failure modes are asymmetric. A quality failure the model gives an incomplete answer, misunderstands the question, or takes an inefficient path is recoverable. A safety failure the model surfaces a client’s account details in a response to a different client, or generates advice that violates financial services regulations is not. The bar for safety evaluation is therefore higher than the bar for quality evaluation, and the monitoring cadence needs to be continuous rather than periodic.

Bedrock Guardrails provides the infrastructure layer for this: automated content filtering, PII detection and redaction, denied topic enforcement, grounding checks that flag responses not supported by retrieved context, and automated reasoning for policy consistency. But tooling is not the same as governance. The guardrails need to be configured against your specific regulatory and policy context, tested against adversarial inputs that probe their boundaries, versioned alongside your prompts and models, and monitored in production for drift. A guardrail that was configured when the system launched and never reviewed is not a control. It is a checkbox.

Jailbreak testing deserves specific mention. An agent deployed in a customer-facing financial services role will encounter users who attempt to manipulate it: users who try to get it to ignore its instructions, reveal information it should not reveal, or take actions outside its authorised scope. Evaluating robustness against adversarial prompts is not a paranoid edge case. It is a baseline requirement. The evaluation dataset needs adversarial examples alongside the standard test cases, and the pass criteria need to specify not just that the system refuses harmful requests but that it refuses them gracefully, without leaking information about its instructions or guardrail configuration.

The governance framing matters here. Safety evaluation is not a pre-launch gate. It is an ongoing control that needs to be integrated into the same continuous evaluation infrastructure as quality and trajectory evaluation. When a guardrail fires in production, that event needs to flow into an incident review process, be logged against the evaluation dataset, and inform the next cycle of adversarial testing. Safety evaluation without a closed feedback loop is not governance. It is theatre.

There is a governance dimension that sits upstream of evaluation and that most organisations have not yet confronted: agent proliferation. As teams across an organisation independently build and deploy agents, the evaluation question shifts from “is this agent safe to operate” to “do we even know what agents are running.” The AWS Agent Registry addresses this directly. It provides a single governed catalogue of all agents, tools, MCP servers, and skills across the organisation regardless of where they are built or hosted, with approval workflows that require agents to move through draft, pending, and approved states before becoming discoverable. Full lifecycle tracking covers versioning, deprecation, and hooks for approval processes. IAM access controls govern who can register, discover, and consume agents. The evaluation implication is that an agent that has not passed through the approval workflow, has not been evaluated against the organisation’s quality and safety standards, and has not been registered in the catalogue should not be reachable by other agents or by applications. Governance of the agent inventory is the precondition for governance of agent behaviour. You cannot evaluate what you do not know exists.

9. Human-in-the-Loop and the Production Feedback Loop

The maturity model peaks at continuous AI assurance, but the mechanism that makes assurance continuous is one that most articles on AI evaluation skip past: the feedback loop from production to evaluation dataset.

The evaluation modes map to two distinct operational needs. Online evaluation monitors live agent interactions continuously: sampling traces, detecting quality degradation, catching silent failures, and tracking quality trends after deployments. On-demand evaluation tests changes before they ship: running evaluation suites in CI/CD pipelines, performing regression testing for builds, and gating deployments on quality thresholds. The use cases for on-demand include validating prompt changes, comparing model performance across versions, preventing quality regressions, and automating quality gates. Neither mode replaces the other. Online evaluation catches what slips through. On-demand evaluation prevents the slip.

Here is how it works in a mature system. Production traffic is sampled continuously and routed through automated evaluation. Responses that fall below quality thresholds, trigger safety guardrails, or exhibit anomalous trajectory patterns are flagged and routed to an annotation queue. Subject matter experts review flagged items, label them, and determine whether they represent genuine failures, edge cases that require new test coverage, or guardrail misconfiguration that needs remediation. Labelled failures are added to the evaluation dataset as regression tests. The next evaluation run includes those regression tests. If the system fails them, the failure is caught before it reaches production again. If it passes, you have evidence that the remediation worked.

This loop is what separates a team that is discovering failures in production from a team that is preventing them. The discovery team fixes bugs reactively. The prevention team converts every production failure into a test that prevents recurrence. Over time, the evaluation dataset becomes a comprehensive record of every failure mode the system has exhibited in the real world, with human-verified labels and documented remediation. That dataset is also the evidence base for regulatory review. When an auditor asks how you know the system is behaving correctly, the answer is not “we tested it before launch.” The answer is “here is the evaluation dataset, here is the continuous monitoring infrastructure, here is the annotation queue, and here is the regression test coverage.”

The human-in-the-loop requirement is not just about quality review. It is about calibration. Automated evaluation metrics drift over time as the input distribution shifts, as new model versions are introduced, and as the task definition evolves. Human reviewers anchored to the original rubric detect when automated metrics have drifted away from what good actually means for this task. Without that calibration layer, automated evaluation becomes self-referential: the system scores well on metrics that no longer reflect the quality criteria that matter.

Bedrock’s human evaluation path AWS managed work team or your own team, with custom metrics and a dedicated SageMaker labelling portal provides the infrastructure for this. But the workflow design is yours. Who reviews flagged items? What are the escalation paths for disagreements between automated and human scores? How quickly do labelled failures make it back into the regression test suite? How are rubric updates versioned and communicated? These are process design questions, not tooling questions, and they determine whether the feedback loop actually closes or just looks like it does.

10. Level 5: Evaluation as Infrastructure

The maturity model peaks at continuous AI assurance: evaluation integrated into CI/CD, observability pipelines, model migration governance, human review workflows, and production monitoring. This is not a discrete activity. It is an operational posture.

The reason this matters is model drift. A model that passes evaluation at deployment will not pass the same evaluation twelve months later if the underlying model has been updated, the prompt has been modified, the retrieval corpus has changed, or the real-world distribution of inputs has shifted. Production monitoring creates a data flywheel where real-world failures flow into annotation queues for expert review, then become regression tests that prevent the same failures from reaching users again.

Model migration is a specific instance of this problem worth naming directly. When a model provider releases a new version and they do, regularly, with or without much notice, every evaluation that was valid for the previous version needs to be re-run against the new one. A model that scored 0.94 on correctness under Claude Haiku may score differently under a successor model, not because the task changed but because the model’s behaviour changed. Version-pinning your inference endpoint and treating model upgrades as a deployment event that triggers a full evaluation cycle is the practice that keeps this manageable. Teams that do not do this discover model regressions in production.

AWS is building the next step beyond this into AgentCore Optimization, currently in preview. The approach has three components. The first analyses production traces to pinpoint exactly where an agent underperforms, then generates optimised system prompts and tool descriptions targeting specific evaluators. The system proposes. You decide what ships. The second packages model ID, system prompt, and tool descriptions into immutable versioned bundles, so swapping a prompt or a model becomes a configuration change rather than a code deployment, with instant rollback to any previous bundle version. The third runs batch evaluation against curated datasets before any live traffic is involved, supports A/B testing of variants against real production traffic with statistical significance, and promotes the winner or rolls back, with every step requiring a decision. What that architecture describes is evaluation-driven continuous improvement: the production trace is not just an observability artefact. It is the input to the next optimisation cycle.

The enterprise tooling landscape at Level 5 includes platforms like LangSmith, Langfuse, Arize Phoenix, and MLflow with its trace-based evaluation and safety judges. On AWS the full picture is visible in the AgentCore platform architecture. The runtime layer handles prompt, tools, context, and memory, and accepts any framework, any model, and any agent including agent-to-agent communication via the A2A protocol. Above that sit identity, gateway with policy enforcement, browser tooling, and a code interpreter. Below all of it, as the foundation rather than the afterthought, sit three horizontal layers: Observability via OpenTelemetry, Evaluations, and Optimization. That stack positioning is the architectural statement. Evaluation is not instrumented on top of the agent after the fact. It is built into the platform underneath it.

The A2A capability deserves a note. When agents call other agents, the trajectory evaluation problem compounds. You are no longer evaluating a single agent’s sequence of tool calls. You are evaluating a graph of agent interactions, each with its own tool calls, reasoning steps, and potential failure modes. A sub-agent that performs correctly in isolation may fail when orchestrated by a parent agent that passes it malformed context. Trajectory evaluation for multi-agent systems requires tracing across agent boundaries, not just within a single agent’s execution span. This is an emerging area and the tooling is still maturing, but the evaluation requirement is real now for any organisation building orchestrated agent workflows.

On AWS, this maps to AgentCore Observability feeding CloudWatch: runtime traces, span-level metrics, session behaviour, and tool usage all captured as structured telemetry that can trigger alerts, feed dashboards, and produce the audit trail a regulator can actually read. What distinguishes Level 5 from Level 3 is not the sophistication of individual metrics but the integration of evaluation into the deployment lifecycle as a continuous control rather than a pre-launch gate.

For regulated industries, this is where AI governance becomes tractable. You cannot write an audit finding against a demo. You can write one against a production system with logged trajectories, versioned evaluation datasets, a documented human review process, and a closed feedback loop from production failures to regression tests. The evaluation infrastructure is the evidence layer. Build it like one.

11. Why This Matters Now

The framing I keep returning to is this: you are not deploying a model. You are deploying a system that makes decisions, often at the point of customer contact, often in domains with regulatory consequence. The evaluation question is not “does the output look reasonable.” It is “can I demonstrate, with evidence, that the system behaves correctly across the distribution of inputs it will encounter, that it uses its tools as intended, that it stays within its safety boundaries, and that I will know when it stops doing any of those things.”

Bag of words gave us counting. Semantic similarity gave us meaning. LLM-as-a-judge gave us scalable assessment. RAG evaluation gave us grounding. Trajectory and tool evaluation give us process visibility. Safety evaluation gives us compliance. Continuous assurance with a closed feedback loop gives us governance.

Each level is a capability increase. But the jump from Level 2 to Level 4 is not incremental. It is a shift from evaluating outputs to evaluating behaviour. And the jump from Level 4 to Level 5 is a shift from evaluating behaviour to governing it. For any organisation running agents in production, both shifts are not optional. They are the foundation on which trust in these systems has to be built.

References

- MT-Bench and Chatbot Arena: LLM Evaluation at Scale (Zheng et al., 2023)

- When AIs Judge AIs: The Rise of Agent-as-a-Judge (arxiv, 2025)

- Gaming the Judge: Unfaithful Chain-of-Thought Can Undermine Agent Evaluation (arxiv)

- LLM-as-a-Judge: How to Build Reliable, Scalable Evaluation (Comet)

- LLM Evaluation in 2025: Metrics, RAG, and Best Practices

- Bag of Words Model in NLP (IBM)

- Lexical vs Semantic Similarity in NLP and LLMs

- Semantic Textual Similarity Metric Guide (Galileo)

- From Counting Words to Understanding Meaning: The Evolution of NLP

- Evaluate Gen AI Agents: Trajectory Evaluation (Google Cloud Vertex AI)

- LangChain AgentEvals: Trajectory Evaluation Docs

- agentevals GitHub (LangChain)

- LLM Evaluation Framework: Trajectories vs Outputs (LangChain)

- What is AI Agent Evaluation? (Databricks)

- Demystifying Evals for AI Agents (Anthropic Engineering)

- Exploring How AI Agent Trajectories Guide Agent Evaluation (Objectways)

- How to Train and Evaluate AI Agents and Trajectories (Labelbox)

- Top Agent Evaluation Tools in 2025 (Maxim AI)

Andrew Baker is Group CIO at Capitec Bank and publishes technology leadership writing at andrewbaker.ninja.