The 10 Nil Paradigm: Why Your 8-Hour Outage Isn’t a “Blip”

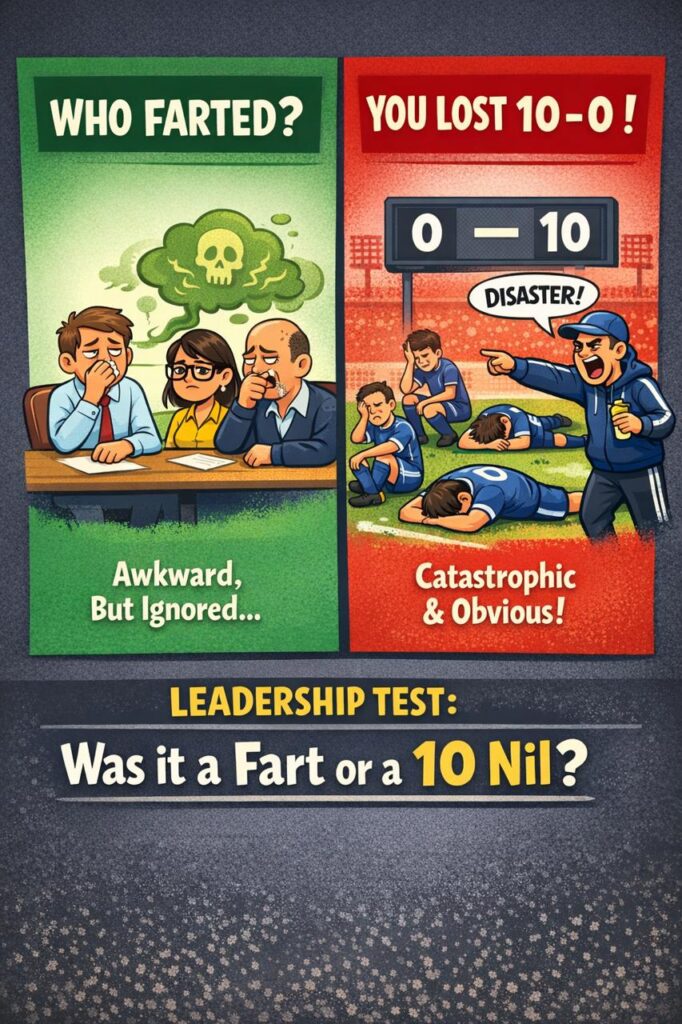

1. Who farted?

If someone farts in a meeting room, everyone notices, even if nobody wants to acknowledge it directly, because the discomfort is immediate, shared, and impossible to ignore. People shift in their seats, avoid eye contact, maybe make a joke, and then, just as quickly, the moment passes and the meeting continues as if nothing material has happened.

Nobody writes a postmortem. Nobody escalates it. Nobody questions whether the organisation is fundamentally broken, because a fart is a localised failure that is socially painful but operationally irrelevant.

Now compare that to losing 10 nil.

If your football team loses 10 nil, there is no ambiguity, no narrative to soften the blow, and no version of events where you were “almost there,” because you were comprehensively and systemically outplayed in a way that is visible to everyone.

That is not awkward. That is catastrophic.

Most organisations behave as if they cannot tell the difference.

2. The model nobody names

A fart is a contained incident, whereas a 10 nil loss is a systemic failure that changes how people see you, whether you like it or not.

A fart is uncomfortable but survivable: it does not change trust, it does not change behaviour, and it does not require structural change. A 10 nil loss, on the other hand, destroys trust instantly, exposes systemic weakness, cannot be reframed into something smaller, and demands change whether you are ready for it or not.

Most organisations take events that are clearly 10 nil losses and quietly downgrade them into something that feels more like a fart, because that version is easier to live with.

That is the problem.

3. Your 8-hour outage is not a fart

If your platform is down for 8 hours, it is not a blip, not bad luck, and not “one of those things that happens in complex systems,” because what your clients experience is not your internal narrative but the complete absence of service.

From their perspective, you are unreliable, you are not in control, you will fail again, and you may not even be telling them the full truth, which means that trust does not degrade gradually in these moments but collapses in a way that is both immediate and expensive to recover from.

You did not get it slightly wrong.

You lost 10 nil.

4. Why organisations downgrade 10 nil to a fart

The real failure is not the outage itself but the story that gets told afterwards, because that story determines whether anything meaningful will change.

Organisations rewrite reality because they are biased towards the people they sit next to, the effort they can see, and the narratives that protect them from owning uncomfortable truths. Proximity bias means leaders spend time with engineers and understand their effort, but have no direct exposure to clients who could not transact. Effort bias replaces outcomes with intent, allowing “they tried their best” to become an acceptable conclusion. Narrative control softens the language into “partial outage” or “degradation,” which avoids saying what actually happened. Self-preservation ensures that if something truly was a 10 nil loss, it is reframed into something smaller so that leadership does not have to own it.

This is how systemic failure becomes socially manageable, even when it is operationally unacceptable.

5. The 10 Nil Test

After every incident, there are a small number of questions that cut through all internal narratives and force clarity, because they shift the perspective from inside the organisation to the outside world where trust is actually decided.

Would a client describe this as catastrophic? Did trust materially reduce? Would this trend publicly if it were fully visible? Would we refuse to accept this level of failure from a vendor we depend on? These are the only questions that matter, because if any one of them is answered with yes, the conclusion is unavoidable.

You did not have a bad day.

You lost 10 nil.
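The test above can be written down as a checklist so that the answer does not depend on who is telling the story. The following is a minimal sketch, not a prescribed tool: the names `IncidentReview` and `is_ten_nil` are hypothetical, and the vendor question is phrased so that "yes" means "we would not tolerate this from a vendor", which keeps the rule that any single yes settles it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IncidentReview:
    """Answers to the four questions, judged from the client's side.

    All field names are illustrative assumptions, not an established schema.
    """
    client_would_call_it_catastrophic: bool
    trust_materially_reduced: bool
    would_trend_publicly_if_visible: bool
    unacceptable_from_a_vendor: bool  # True = we would not accept this from a vendor


def is_ten_nil(review: IncidentReview) -> bool:
    """Return True if any single question is answered 'yes'."""
    return any((
        review.client_would_call_it_catastrophic,
        review.trust_materially_reduced,
        review.would_trend_publicly_if_visible,
        review.unacceptable_from_a_vendor,
    ))
```

The deliberate design choice is `any` rather than a score or a majority vote: there is no weighting to argue about and no way to average one catastrophic answer away.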

6. Engineering comfort vs UX reality

UX teams are conditioned to operate in an environment where failure is visible, immediate, and often public, which means that when they get something wrong, clients respond directly and brutally, forcing teams to confront the gap between what they built and what users actually experience.

Engineering teams, by contrast, can operate behind layers of abstraction where architecture decisions are difficult for non-specialists to interrogate, failures are explained internally in softened language, and the feedback loop is filtered before it reaches the people responsible for building the system.

Over time, this creates a dangerous asymmetry where UX is shaped by truth and discomfort, while engineering can drift into a version of reality where an 8-hour outage every couple of years starts to sound acceptable, not because it is acceptable, but because the full impact is never directly experienced.

Engineering organisations without unfiltered client exposure will always drift towards self-deception.

7. The standard you don’t want to defend

The idea that outages lasting hours are inevitable is not a law of nature but a reflection of design decisions that were made long before the incident occurred, because modern systems provide all the necessary mechanisms to fail quickly, recover quickly, and contain damage before it spreads.

Rapid failover, rollback, feature flags, observability, and blast radius control are not advanced concepts anymore; they are baseline capabilities, which means that extended outages are not evidence of complexity but evidence that the system was designed in a way that allows slow failure and slow recovery.

If your system is unavailable, recovery should happen within minutes, not hours, and that expectation should tighten over time rather than being relaxed.

Long outages are not operational events.

They are design failures being revealed.
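To put "minutes, not hours" into numbers, it helps to compare an 8 hour outage with the downtime a given availability target allows in a year. This is a rough sketch under stated assumptions: the targets of 99.9% and 99.99% are illustrative and do not come from any particular contract, the year is taken as 365 days, and the function names are hypothetical.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes, ignoring leap years


def annual_downtime_budget_minutes(availability_target: float) -> float:
    """Minutes of downtime per year permitted by a target such as 0.9999."""
    if not 0.0 < availability_target < 1.0:
        raise ValueError("availability_target must be strictly between 0 and 1")
    return MINUTES_PER_YEAR * (1.0 - availability_target)


def years_of_budget_consumed(outage_minutes: float, availability_target: float) -> float:
    """How many years of downtime budget a single outage uses up."""
    return outage_minutes / annual_downtime_budget_minutes(availability_target)


# Illustrative comparison for a single 8 hour outage (480 minutes).
outage_minutes = 8 * 60
for target in (0.999, 0.9999):  # assumed targets, for illustration only
    budget = annual_downtime_budget_minutes(target)
    years = years_of_budget_consumed(outage_minutes, target)
    print(f"target {target:.2%}: budget {budget:.1f} min/year, outage uses {years:.1f} years of it")
```

Under these assumptions, one 8 hour outage uses roughly nine tenths of a year of budget at 99.9% and roughly nine years of budget at 99.99%, which is a different order of magnitude from a recovery measured in minutes.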

8. Effort is not the metric

It is entirely possible that your teams worked incredibly hard during the outage, sacrificed personal time, and did everything they could within the constraints of the system, but none of that effort is visible to the client who could not access their money or complete their transaction.

Markets do not reward effort; they respond to outcomes. When leadership starts to substitute effort for outcome, it creates a culture where failure can be justified indefinitely, because there is always a reason why things were difficult.

That is how organisations get stuck.

9. Leadership and uncomfortable truth

Leadership is not the act of protecting people from uncomfortable realities but the act of making those realities visible and unavoidable, because without that clarity there is no foundation for meaningful change.

If you cannot say that you lost 10 nil, you cannot fix the system that allowed it to happen, and if you cannot clearly describe how you will win next time, you will gradually redefine losing until it feels acceptable and no longer demands action.

This is where many organisations settle, not because they lack intelligence or capability, but because they lack the willingness to confront the gap between their current state and the standard they claim to operate at.

10. Outages are not bad luck. They are negative capabilities

When something catastrophic happens, there is a natural tendency to attribute it to bad luck or an unfortunate combination of events, because that framing reduces the sense of control and therefore the sense of responsibility.

But systems do not behave randomly in that way.

If your platform goes down for 8 hours, that is not an unlucky sequence of events but a capability that already existed within the system, waiting to be triggered under the right conditions, because the system was designed in a way that made that outcome possible.

Outages are negative capabilities exploited by chance.

They reveal that you had the ability to fail over slowly, the ability to recover manually, the ability to allow a small issue to cascade, and the ability to operate without sufficient containment, all of which were present long before the incident occurred.

Most systems are not designed to succeed under stress; they are designed to fail in ways that feel acceptable to the organisation that built them, which is why the language used afterwards matters so much.

Describing an outage as a perfect storm or bad timing assumes low control, and low control is convenient because it removes accountability, but it is also fundamentally incorrect.

If a system can fail in a particular way, it eventually will, which means that the only meaningful question is not what went wrong, but why that failure mode was allowed to exist in the first place.

High-performing organisations assume that every weakness will be exposed, every failure mode will be triggered, and every outage is simply a design decision revealed under pressure.

Once you accept that losing 10 nil is a capability rather than an accident, the conversation shifts from explanation to redesign.

11. You built a system that can lose 10 nil

Teams do not lose 10 nil because they are unlucky. They lose 10 nil because they built systems that allow that outcome to occur, and leadership allowed those systems to persist without forcing the necessary changes.

If your platform can be down for 8 hours, you did not have a bad incident. You built a system that can fail catastrophically and then normalised that failure by describing it in softer terms.

Calling it a fart does not change what it is. It only makes it easier to sit in the room afterwards while nothing meaningful improves.