Why Multicloud Is Not a Cloud Resilience Strategy

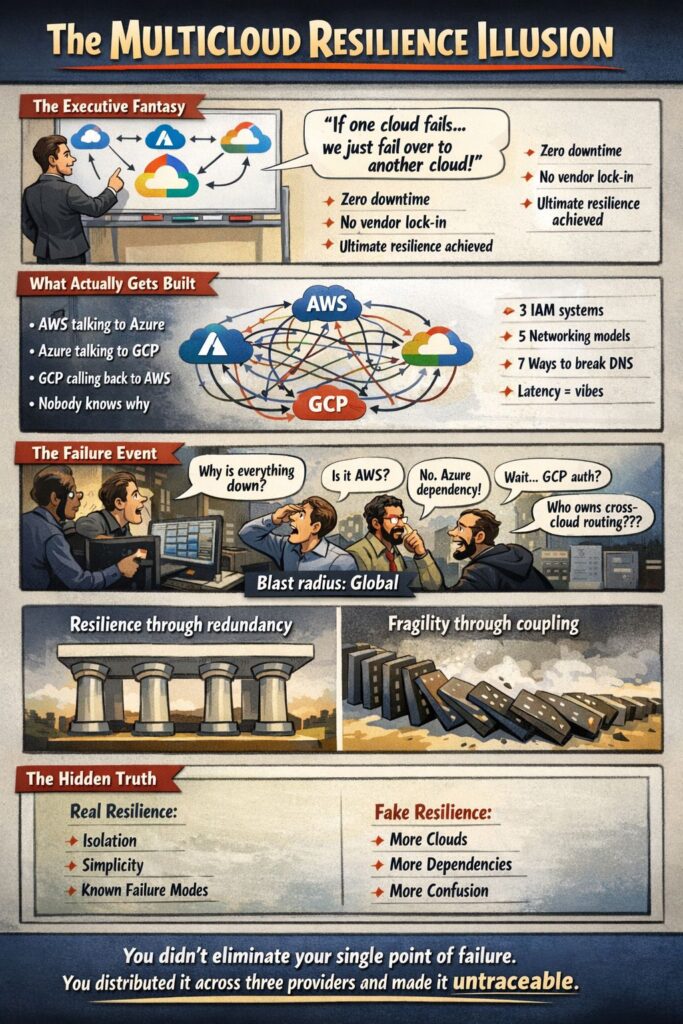

There is a particular kind of nonsense that circulates in enterprise technology conversations, the kind that sounds like wisdom because it wears the clothes of prudence. Multicloud architecture as a cloud resilience strategy is that nonsense. It has the shape of risk management and the substance of a comfort blanket, and the industry has spent the better part of a decade selling it to boards who do not know enough physics to push back.

The argument goes like this: if you depend on a single cloud provider, you are exposed to that provider’s failures. Therefore, spread your workloads across two or more providers and you eliminate that exposure. It sounds reasonable. It is not. It collapses the moment you apply any meaningful scrutiny to what cloud resilience actually requires, and it is worth working through exactly why, because the pattern of thinking it represents keeps appearing in other places under other names.

1. What a Real Cloud Resilience Strategy Protects Against

Before you can design a resilience strategy, you need to be precise about the failure modes you are designing around. “The cloud goes down” is not a failure mode. It is a category error dressed up as one.

Real cloud failure modes are specific and structural, and they operate at different layers. An Availability Zone loses power or network connectivity: this is handled by synchronous replication across AZs within the same region, which is the baseline of any serious cloud architecture. A region fails entirely: this is addressed by asynchronous disaster recovery to a second region of the same provider, accepting some recovery time and data lag in exchange for geographic independence. A control plane event disrupts management operations across a region: this is not solved by multicloud, because your control plane dependency has simply moved to whichever provider you are failing over to. An identity or DNS failure propagates across dependent services: this is actively made worse by multicloud, because spanning two providers multiplies the number of identity systems and resolution paths that can fail and interact in unexpected ways.

This layering matters because each failure mode has a different appropriate response, and the responses do not all point in the same direction. The progression from a single AZ, through multi-AZ, through multi-region within a single provider, through genuine physical independence for specific critical rails, represents a coherent hierarchy of resilience options with understood trade-offs at each step. Multicloud sits outside that hierarchy. It does not extend it. It introduces a different set of failure modes that are harder to reason about and harder to recover from, while providing at best marginal protection against the failure modes the hierarchy already addresses.

2. The Physics You Cannot Argue With

In South Africa, the relevant geography is AWS in Cape Town and Azure in Johannesburg. The round-trip latency between those two locations is approximately 30 milliseconds. That number is not a configuration setting. It is not a product limitation. Its floor is set by the speed of light in fibre over the physical distance between two points, with the route the cable actually takes and the equipment along it accounting for the rest, and no amount of vendor investment or engineering cleverness removes that floor.
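A rough sketch of where a figure of that order comes from: propagation delay in fibre sets a hard lower bound, and real routes, which are longer than the straight line and pass through switching equipment, add to it. The straight-line distance of roughly 1,300 km and the refractive index of 1.47 are assumptions for illustration, not measurements.

```python
SPEED_OF_LIGHT_KM_PER_S = 299_792.458  # in vacuum
FIBRE_REFRACTIVE_INDEX = 1.47          # assumption: typical single-mode fibre


def min_round_trip_ms(path_km: float,
                      refractive_index: float = FIBRE_REFRACTIVE_INDEX) -> float:
    """Lower bound on round-trip time over a fibre path of the given length."""
    speed_in_fibre_km_per_s = SPEED_OF_LIGHT_KM_PER_S / refractive_index
    return 2 * path_km / speed_in_fibre_km_per_s * 1000


# Assumption: about 1,300 km in a straight line between the two cities.
# The longer paths stand in for real cable routes, which never run straight.
for path_km in (1_300, 1_600, 2_000):
    print(f"{path_km} km path: at least {min_round_trip_ms(path_km):.1f} ms round trip")
```

Even the idealised straight-line case lands well above a 5 millisecond budget before a single router, firewall, or TLS handshake is counted.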

For a retail banking core, the operational latency target for service-to-service calls is around 5 milliseconds. This is not an arbitrary preference. It is what the end-to-end transaction budget requires to keep the customer-facing experience responsive. When you consider that a single customer transaction may involve dozens of internal service calls, each carrying its own latency contribution, the arithmetic is unforgiving.
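To make that arithmetic concrete, here is a minimal sketch. It assumes 24 internal calls per transaction (one reading of "dozens") and assumes they run strictly one after another, which is the worst case; parallel fan-out would soften the totals but not the per-call penalty.

```python
def transaction_latency_ms(local_calls: int, cross_cloud_calls: int,
                           local_ms: float = 5.0,
                           cross_cloud_ms: float = 30.0) -> float:
    """Total latency when every call waits for the previous one to finish."""
    return local_calls * local_ms + cross_cloud_calls * cross_cloud_ms


CALLS = 24  # assumption: "dozens" of internal calls per customer transaction

for crossing in (0, 6, 24):
    total = transaction_latency_ms(CALLS - crossing, crossing)
    print(f"{crossing:>2} of {CALLS} calls cross the boundary: {total:.0f} ms")
```

Under these assumptions, moving only a quarter of the calls across the provider boundary more than doubles the end-to-end time.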

Synchronous database replication across a 30ms link is simply not viable for a high-volume transactional workload. In a banking environment, you cannot allow data to be eventually consistent across two regions. When a debit and a credit need to be atomic, your commit path has to round-trip through your replication target before the transaction can be confirmed. At 30ms per round trip, across thousands of concurrent transactions, you have not built a resilient system. You have built a slow one that will periodically queue itself into a timeout spiral under load.
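The queueing effect can be sketched with two back-of-envelope formulas. The first is the ceiling on back-to-back commits against a single contended row, such as a busy account balance, when each commit must wait for the replica. The second is Little's law: transactions in flight equal arrival rate multiplied by time in the system. The 5,000 transactions per second is a hypothetical load, and commit latency is assumed to be dominated by the replication round trip.

```python
def max_serial_commits_per_second(commit_latency_ms: float) -> float:
    """Ceiling on back-to-back commits that must each wait for the replica."""
    return 1000 / commit_latency_ms


def in_flight_transactions(tps: float, commit_latency_ms: float) -> float:
    """Little's law: concurrency = arrival rate x time in system."""
    return tps * commit_latency_ms / 1000


HYPOTHETICAL_TPS = 5_000  # assumption, for illustration only

for label, latency_ms in (("same-region replica", 1.0), ("30 ms link", 30.0)):
    print(f"{label}: "
          f"{max_serial_commits_per_second(latency_ms):.0f} serial commits/s per hot row, "
          f"{in_flight_transactions(HYPOTHETICAL_TPS, latency_ms):.0f} transactions in flight")
```

Every one of those in-flight transactions holds a connection, and often a lock, for the full round trip, which is where the timeout spiral starts.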

This is the physics argument that tends to end the multicloud conversation among people who have actually run high-scale stateful workloads. The theoretical redundancy offered by a second provider sits on the wrong side of a latency boundary that makes it operationally useless for the workloads that matter most.

3. Net Risk vs Gross Risk

The multicloud argument is almost always made in gross risk terms. AWS could fail. Therefore AWS is a risk. Therefore reducing dependency on AWS reduces risk. Each step sounds plausible in isolation, and the conclusion follows if you never ask the obvious follow-up question: compared to what?

The net risk calculation looks different. Yes, a regional AWS failure is possible. It is also rare, increasingly well-contained by the provider’s own investment in regional isolation, and in a well-architected multi-AZ deployment, survivable at the AZ level for most failure classes. Against that, set the concrete risks you introduce by spanning two providers: the complexity of a spanning architecture, the coordination failure modes, the policy drift, the operational overhead of two toolchains, the latency penalty on your most critical data paths. These are not theoretical. They are costs you pay continuously, and they increase the probability of an outage on every day that is not the day AWS’s Cape Town region has a sustained failure.

The net risk of a well-architected single provider deployment is lower than the net risk of a poorly-architected multicloud deployment for the overwhelming majority of workloads. The gross risk framing survives only because it counts one side of the ledger. When you count both sides honestly, the arithmetic tends to favour depth in a single provider over breadth across two.

This is not a novel observation. It is the engineering intuition behind every serious high-scale system that has chosen to invest in understanding one platform deeply rather than hedging across several superficially. Complexity is itself a failure mode, and multicloud adds more complexity than it removes risk. Every additional cloud provider is not additive complexity. It is multiplicative coordination overhead, applied across APIs, failure semantics, throttling models, observability toolchains, and incident response processes simultaneously.

4. The Security Architecture Problem

The multicloud resilience argument occasionally survives the latency objection by retreating to security. If the resilience case does not hold up, perhaps the security posture of spanning two providers offers some benefit. This is also largely illusory, and for reasons that are worth spelling out carefully.

Cloud-native security at the infrastructure level is deeply provider-specific. In AWS, the fundamental unit of identity is the IAM role, and the native network segmentation mechanism is the Security Group, which can reference other Security Groups directly. This means that a rule permitting your web tier to call your database tier is expressed in terms of the group membership of those services, not their IP addresses, and it is enforced at the hypervisor level without any additional tooling. Azure has its own equivalent constructs. Neither provider’s constructs are legible to the other. An AWS Security Group cannot reference an Azure Service Principal. An Azure NSG cannot reference an AWS IAM role.

This means that any security architecture spanning both providers is, by definition, operating at a lower level of abstraction than either provider’s native model. You are forced back to IP addresses and CIDR ranges as your segmentation primitive, which is a significant regression. The alternative is to introduce a third-party overlay: a service mesh, a centralised policy orchestration tool, a zero-trust exchange sitting in the middle. Each of these options reintroduces a centralised control plane that becomes its own single point of failure, while simultaneously adding latency and operational complexity that your engineering team now has to maintain across two different providers’ API surfaces. Your identity model fragments across two providers with no native bridge between them. Your observability fragments across two toolchains with different data models, different query languages, and different alert routing. When something goes wrong, your incident response team is traversing Cloudflare, AWS, and Azure simultaneously while each provider’s support organisation points confidently at the boundary of their own infrastructure. The time lost to that ping-pong is time you cannot afford.

Policy drift is the quiet killer in these architectures. A firewall rule updated in AWS that fails to propagate to its Azure equivalent because an API changed and the orchestrator has not caught up creates a security gap that is invisible until it is exploited. The more complex the spanning architecture, the larger the surface area for this kind of invisible inconsistency. Multicloud does not strengthen your security model. It turns it into the lowest common denominator of what two providers can express simultaneously, enforced through a layer of third-party tooling that neither provider fully understands or supports.
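A drift check is simple to express once both providers' rules have been normalised into a common shape, and that normalisation is the hard part this sketch assumes away. The `Rule` tuple and the tier names are hypothetical, not either provider's data model.

```python
from typing import Iterable, NamedTuple


class Rule(NamedTuple):
    """Hypothetical provider-neutral form of a segmentation rule."""
    source: str       # logical tier name, e.g. "web"
    destination: str  # logical tier name, e.g. "db"
    port: int
    action: str       # "allow" or "deny"


def policy_drift(aws_rules: Iterable[Rule], azure_rules: Iterable[Rule]) -> dict:
    """Return the rules that exist on one side but not the other."""
    aws, azure = set(aws_rules), set(azure_rules)
    return {"only_in_aws": aws - azure, "only_in_azure": azure - aws}


aws = [Rule("web", "db", 5432, "allow"), Rule("batch", "db", 5432, "deny")]
azure = [Rule("web", "db", 5432, "allow")]  # the deny rule never propagated

drift = policy_drift(aws, azure)
print(drift["only_in_aws"])    # the rule Azure is silently missing
print(drift["only_in_azure"])  # empty set in this example
```

The comparison is a few lines. Keeping the translation into `Rule` correct as two provider APIs evolve independently is the permanent cost.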

5. Cloud Gluttony and the Failure You Are Actually Creating

Here is the structural problem that the multicloud resilience argument consistently avoids examining. When you introduce a second cloud provider, you do not remove a single point of failure. You add more baskets and tie them together with string, then describe the arrangement as diversification. Hybrid, multicloud, multi-region architecture is a form of self-mutation: your architecture accumulates complexity it cannot reason about, one well-intentioned redundancy decision at a time. This is cloud gluttony: the organisational inability to make a disciplined architectural choice, dressed up as strategic optionality.

It is Augustus Gloop falling into the chocolate river. Not a victim of the factory. A victim of wanting everything, all at once, without accepting that the physics of the pipe had an opinion about that. The enterprise that cannot commit to a regional architecture, that wants the discounts of us-east-1 and the compliance posture of eu-west-1 and the latency of af-south-1 and the managed services of Azure and the AI tooling of GCP, is not building resilience. It is building a monument to appetite, consuming every available option until the architecture can no longer support its own weight. Augustus did not drown in chocolate because the factory was poorly designed. He drowned because nobody told him that wanting everything is not a strategy, and he would not have listened anyway.

The shared failure domains do not disappear. They move. DNS, identity, traffic routing, and CDN infrastructure are the hidden choke points that span every cloud you are running on, and a failure in any of them propagates across your entire estate regardless of how many providers it touches. A Route 53 or Cloudflare outage does not care that you have workloads in two clouds. Your clients cannot reach either of them. An identity provider failure does not care about your provider count. Both clouds go dark simultaneously. The multicloud architecture has not removed these dependencies. It has made them harder to see and harder to fix.
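One way to surface those hidden choke points is to walk the dependency graph of each stack and intersect the results. The graph below is a hypothetical estate with invented node names; the technique is a plain transitive closure.

```python
def transitive_dependencies(service: str, graph: dict) -> set:
    """Everything the service depends on, directly or indirectly."""
    seen = set()
    stack = [service]
    while stack:
        node = stack.pop()
        for dep in graph.get(node, ()):
            if dep not in seen:
                seen.add(dep)
                stack.append(dep)
    return seen


def shared_failure_domains(a: str, b: str, graph: dict) -> set:
    """Dependencies whose failure would take out both stacks at once."""
    return transitive_dependencies(a, graph) & transitive_dependencies(b, graph)


# Hypothetical estate: two stacks on two providers, one set of shared plumbing.
GRAPH = {
    "aws-stack": ["aws-region", "cdn", "identity-provider"],
    "azure-stack": ["azure-region", "cdn", "identity-provider"],
    "cdn": ["dns"],
    "identity-provider": ["dns"],
}

print(sorted(shared_failure_domains("aws-stack", "azure-stack", GRAPH)))
```

Anything in the intersection is a single point of failure that the second provider did nothing to remove.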

The data layer is where the argument collapses most completely. Provider-native managed database services are not portable and they are not interoperable. AWS Aurora PostgreSQL is not going to run a synchronous read replica in Azure. The replication mechanisms, the storage engines, the failover protocols are all provider-specific, and there is no standards body harmonising them. Split a service across two providers and you lose access to the native replication tooling that makes high-availability databases tractable, replacing it with bespoke synchronisation logic that you now own, maintain, and debug. You have not gained a resilience option. You have discarded several and substituted something more fragile in their place.

What a real failure looks like in a spanning architecture is instructive. A latency spike in one cloud causes a cross-cloud dependency to time out. The retry logic, designed for a single-provider latency profile, storms the connection. The backpressure propagates in both directions. A failure that would have been contained within one provider’s blast radius has now crossed the wire into the other, and your incident response team is attempting to reason about a distributed system state that spans two completely different operational models under the worst possible conditions. You have not prevented failure propagation. You have given it a wider surface to travel across.
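The retry storm has simple worst-case arithmetic. If each layer in a call chain retries independently on every failure, with no backoff and no retry budget, the attempts multiply per layer. The sketch below assumes exactly that worst case; real systems with jitter and budgets do better.

```python
def retry_amplification(retries_per_layer: int, layers: int) -> int:
    """Worst-case attempts reaching the failing dependency per original request."""
    return (1 + retries_per_layer) ** layers


for layers in (1, 2, 3):
    attempts = retry_amplification(retries_per_layer=3, layers=layers)
    print(f"{layers} layer(s), 3 retries each: up to {attempts} attempts per request")
```

Three layers with three retries each can turn one failing request into 64 attempts against a dependency that is already struggling, now arriving over a cross-cloud link.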

The operational consequences compound everything above. Your engineers now need deep expertise in two provider ecosystems, two sets of IAM models, two networking constructs, two observability toolchains, two incident response processes. Every runbook is twice as long. Every incident has twice as many possible explanations. The mean time to diagnose and recover from a failure increases substantially, which is the opposite of what a resilience strategy is supposed to achieve.

6. Hybrid Multicloud: Every Option, No Architecture

Hybrid multicloud is what happens when an organisation never retires anything and calls the result a strategy. It is the fullest expression of cloud gluttony, and it is worth being precise about what it actually consists of in physical terms, because the abstraction of “hybrid multicloud” allows people to underestimate it. In practice it means at least two on-premise data centres, three Availability Zones in one cloud provider and two in another: seven distinct physical locations in total, running across multiple technology stacks, with multiple DNS solutions that each have their own failure characteristics and their own team who configured them for reasons that made sense at the time and are now partially documented at best. Every one of those locations is a failure domain. Every boundary between them is a latency penalty, a security surface, and a coordination problem waiting to manifest at the worst possible moment.

Nobody has yet attempted to make this arrangement multi-regional, as far as anyone can verify. But the same appetite that produced seven locations across two providers and two data centres will eventually find someone willing to ask why the Johannesburg AZ does not have a counterpart in Frankfurt, and when that conversation happens the answer will probably be yes, because it always has been. It is not a considered architectural position. It is the accumulated consequence of never saying no, and when it fails you will need an astrophysicist to explain why.

The failure modes compound in ways that vanilla multicloud does not fully capture. In a pure multicloud deployment you are at least working with two known operational models. In a hybrid multicloud environment you are working with every operational model simultaneously, each with its own failure semantics, its own observability toolchain, its own incident response process, and its own team who has never practised a joint failover with any of the others. The on-premise team and the cloud team are almost certainly different people with different runbooks and different escalation paths. When the failure crosses the boundary between them, which it will, the recovery process requires coordination between groups who do not share a common mental model of the system they are trying to restore.

The compounding risk effect is the part the multicloud resilience argument never accounts for honestly. If your workload has dependencies that span two providers, the probability that an outage in either provider affects you is not reduced relative to a single provider deployment. It is increased, because you now have two blast radii that can reach you instead of one. You have not given yourself more options. You have given yourself more complex failure planes, a higher aggregate probability of being caught inside one of them, and a significantly more complex recovery pathway when you are. The architecture that was supposed to protect you from provider failure has instead made you a creditor to both of them simultaneously, exposed to the failure risk of each without the operational depth to recover cleanly from either.
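The probability claim can be checked in a few lines. The sketch assumes the workload needs every provider it spans, that outages are independent, and that each provider has a 1% chance of a relevant outage in some period. All three are assumptions chosen to keep the arithmetic visible, not measured figures.

```python
import math


def p_affected_by_any(outage_probabilities: list) -> float:
    """Chance that at least one provider you depend on has an outage.

    Assumes independent outages and a workload that needs every provider.
    """
    p_none = math.prod(1 - p for p in outage_probabilities)
    return 1 - p_none


P_OUTAGE = 0.01  # hypothetical per-provider probability for the period

print(f"one provider:  {p_affected_by_any([P_OUTAGE]):.4f}")
print(f"two providers: {p_affected_by_any([P_OUTAGE, P_OUTAGE]):.4f}")
```

A spanning dependency roughly doubles the exposure. It only falls below the single-provider figure if either side can genuinely run without the other, which is exactly the property the earlier sections argue is so hard to achieve.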

7. The us-east-1 Concentration Problem

There is a failure mode that sits between “AWS is fine” and “multicloud is the answer” that the industry has been remarkably reluctant to discuss plainly. A significant proportion of the outages that get reported as AWS failures are not regional infrastructure failures at all. They are the predictable consequence of a large number of organisations voluntarily concentrating their workloads into a single AWS region for reasons that have nothing to do with architecture and everything to do with commercial incentives and access to the latest products.

The DynamoDB event that reverberated globally was not a black swan. It was not even close to a black swan. It was a predictable, mundane failure that manifested in precisely the way any distributed system failure should manifest in a regionally scoped service: it affected the region it was in, and nothing else. A race condition is not exotic. It is not unpredictable. It is the kind of defect that exists in every sufficiently complex distributed system and surfaces eventually under the right combination of load and timing. The failure behaved exactly as the architecture said it would. The global blast radius only happened because half the world’s SaaS services had been thrown into a single region with no regional resilience. The consequences were the entirely predictable result of an industry that had spent years making an irresponsible architectural bet, sustained by engineering indifference and commercial incentives.

us-east-1 is AWS’s original Northern Virginia region. It has the longest service catalogue, the most competitive historical pricing, and the earliest access to new capabilities. New AWS services frequently land there first, sometimes exclusively for extended periods, which creates a gravitational pull for engineering teams who want the latest tooling. Procurement teams chasing volume discounts negotiate commitments that anchor them there. The result is a region carrying a share of global cloud traffic that is wildly disproportionate to its share of AWS’s physical infrastructure, not because the architecture demands it, but because the commercial incentives pointed that way and the engineering teams either did not understand the risk or did not have the standing to put the energy and time into regional resilience.

The concentration is now a systemic problem that individual organisations are contributing to without understanding their own role in it. Every global vendor that anchors a critical dependency in us-east-1 makes the next us-east-1 event worse for every other vendor in the same region. The blast radius of a race condition in a regionally scoped service is not fixed. It scales with the number of organisations that have failed to respect the regional isolation boundaries that AWS’s own architecture provides. They are not just exposed to the risk. They are the risk, compounding each other’s exposure every time another workload lands in Northern Virginia because the discount was better.

Here is the question that the multicloud resilience argument never asks directly. Global technology vendors, organisations with dedicated platform engineering teams, deep AWS expertise, and the budget to execute cross-regional architecture properly, still struggle to achieve clean cross-regional resilience within a single cloud provider. That is the benchmark. Those are the best-resourced, most technically capable organisations in the world attempting the simplest version of the problem, and they still get caught in us-east-1 events because the commercial gravity of the region overwhelmed the architectural discipline required to resist it. If that is the performance of the “most capable players”, what exactly does a legacy corporate with a spray-and-pray cloud architecture think it is going to achieve across two providers, two regions, and seven physical locations stitched together with third-party orchestration tooling and a DR runbook that has not been tested since it was written?

The answer is not multicloud. The answer is to use the region that is geographically and operationally appropriate for your workload, build across the Availability Zones within it, and treat the commercial pressure to anchor in us-east-1 as the architectural risk that it actually is. A bank in Cape Town running in af-south-1 across three AZs is more resilient to a us-east-1 race condition than a bank in London running in us-east-1 with a nominal DR footprint somewhere else. Regional discipline is not a constraint. It is the architecture working as intended.

8. Cloud-Native Is Not Optional

There is a related failure mode that the multicloud conversation tends to obscure, and it is worth naming separately. The resilience gains from a modern cloud architecture only materialise if you actually build for the cloud rather than simply moving existing workloads onto it.

Lifting and shifting an on-premise workload into a cloud provider’s infrastructure gives you the cost model and operational overhead of cloud without the resilience benefits. A virtualised monolith running on EC2 instances in a single AZ is not a cloud-native architecture. It is a data centre workload with a different billing arrangement, and it will fail in exactly the ways that data centre workloads fail, with none of the recovery speed that a properly instrumented, immutable, infrastructure-as-code deployment provides.

The proof of this is visible in incident response. When the CrowdStrike update failure propagated globally in 2024, the organisations that recovered fastest were not the ones with the most providers. They were the ones with the most automated, observable, and re-paveable infrastructure. Organisations that had built on cloud-native primitives, with immutable infrastructure defined in code and deployed through automated pipelines, could identify the affected layer and restore clean state in hours. Organisations with manually managed, snowflake servers spent days. The recovery speed advantage had nothing to do with which cloud was involved and everything to do with how deeply the engineering discipline of cloud-native operations had been internalised.

This is the distinction that the multicloud narrative consistently fails to make: the question is not how many clouds you are on, it is how well you have built for the one you are on.

9. Incident Response in Multicloud Is Slower, Not Faster

Resilience is not defined by how many clouds you run on. It is defined by how quickly you can detect, isolate and recover from failure. Multicloud degrades all three.

Detection slows down because telemetry is fragmented. Metrics live in different systems. Logs are split across providers. Traces do not cross boundaries cleanly. There is no single view of truth, only partial perspectives stitched together under pressure.

Isolation becomes harder because the failure domain is unclear. Is it AWS networking? Azure identity? Your own routing layer? A shared dependency like DNS? Each provider appears healthy in isolation while the system as a whole is failing.

Recovery slows down because execution paths are duplicated. Different IAM models. Different networking constructs. Different deployment pipelines. Different operational runbooks. Every action requires translation before it can be executed.

At this point the outage turns into coordination overhead. Teams split across platforms. Knowledge is fragmented. Decisions are delayed while each side validates its own environment. The system enters a feedback loop of uncertainty.

This is where the theory collapses completely. Instead of one provider to diagnose, you now have two. Instead of one set of tools, you have many. Instead of one clear failure path, you have multiple overlapping possibilities.

And then the final failure mode appears. Each provider looks correct from its own perspective. The issue sits in the interaction between them. No single dashboard shows it. No single team owns it. Resolution requires cross-cloud reasoning under time pressure. Multicloud does not reduce mean time to recovery. It increases it. When systems fail, simplicity wins. Multicloud replaces simplicity with coordination overhead.

10. What a Sound Cloud Resilience Architecture Actually Looks Like

The architecture that actually delivers resilience for a high-scale, low-latency workload is not complicated to describe, though it is demanding to execute. It starts with multi-AZ deployment within a single region as the non-negotiable baseline: independent failure domains, synchronous replication, and interconnects with latency in the low single-digit milliseconds or better. For workloads that require geographic redundancy beyond that, multi-region deployment within the same provider extends the model with asynchronous replication and defined recovery objectives, at the cost of some consistency and recovery time that the business has explicitly accepted. For workloads where even that exposure is unacceptable, the answer is not a second cloud. It is genuine physical independence on a purpose-built rail.

That last point deserves more than a passing mention. Decoupling a critical function onto its own independent rail is architecturally quite different from the multicloud argument, and the distinction matters. A card payment network, for example, has operational requirements that are different enough from a cloud-hosted banking application that it justifies its own infrastructure entirely. It is not a fallback target that you fail over to when the cloud has a problem. It is a structurally independent system that continues operating regardless of what the cloud does, because its failure domain is separate by design rather than by routing configuration. The independence is real because the failure domains do not intersect. Multicloud independence is largely theoretical because the failure domains, as we have seen, intersect in ways that are easy to miss and expensive to discover under pressure.

This is the correct framing for resilience decisions: not “should we use two clouds?” but “what are the distinct failure domains in our system, and have we structurally isolated the things that must not fail together?” The answer sometimes leads to a second cloud for specific workloads. More often it leads to better use of the multi-AZ capabilities you already have, to decoupled queuing that absorbs downstream failures gracefully, to circuit breakers and bulkheads that contain the blast radius of a single service’s failure, and to the kind of investment in observability and automated recovery that determines how fast you restore service when something does go wrong.
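As an illustration of one of those containment patterns, here is a minimal circuit breaker sketch. It is a teaching example rather than a production implementation: it is not thread-safe, the threshold and timeout values are arbitrary, and the class and exception names are invented here.

```python
import time


class CircuitOpenError(RuntimeError):
    """Raised when the breaker refuses a call without trying the dependency."""


class CircuitBreaker:
    def __init__(self, failure_threshold=3, reset_timeout_s=30.0,
                 clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_timeout_s = reset_timeout_s
        self.clock = clock          # injectable so the behaviour can be tested
        self.failures = 0
        self.opened_at = None       # None means the circuit is closed

    def call(self, fn, *args, **kwargs):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_timeout_s:
                raise CircuitOpenError("dependency presumed unhealthy; failing fast")
            # Timeout elapsed: half-open, so let one trial call through.
        try:
            result = fn(*args, **kwargs)
        except Exception:
            self.failures += 1
            if self.opened_at is not None or self.failures >= self.failure_threshold:
                self.opened_at = self.clock()  # open, or re-open after a failed trial
            raise
        # Success closes the circuit and clears the failure count.
        self.failures = 0
        self.opened_at = None
        return result
```

Wrapping a downstream call as `breaker.call(fetch_balance, account_id)`, where `fetch_balance` is whatever function talks to the dependency, means a sick dependency costs callers a fast local exception rather than a held connection and a timeout.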

11. When Multicloud Is Actually Legitimate

A thorough treatment of this subject requires acknowledging that multicloud is not always wrong. There are genuine use cases, and being clear about what they are makes the central argument stronger rather than weaker, because it shows that the critique is not a blanket rejection of complexity but a precise objection to applying multicloud in contexts where it does not hold up.

Stateless workloads at the edge are the clearest case. CDN and DNS distribution across multiple providers is straightforwardly sensible: the workloads are stateless, the latency requirements are different, and genuinely global distribution across independent network paths reduces exposure to provider-specific routing issues. This is multicloud working as advertised, because the constraints that make it impractical for stateful core systems simply do not apply.

Regulatory data residency requirements occasionally force the issue. If a qualifying region does not exist with your preferred provider for a specific jurisdiction, and you have a legal obligation to keep data within that jurisdiction, you may have no choice but to use a second provider for that workload. This is a compliance-driven decision rather than a resilience decision, and it should be understood as such: you are accepting the costs of a spanning architecture because you have no alternative, not because the architecture is sound.

Development tooling and non-critical workloads sometimes have legitimate reasons to span providers, particularly where specific managed services offer capabilities that are genuinely differentiated and where the workload can tolerate the latency and consistency trade-offs that a spanning architecture imposes.

What these cases have in common is that they are specific, bounded, and involve workloads that are not making synchronous calls across the provider boundary. Multicloud is a portfolio strategy, not a runtime architecture. The moment you introduce synchronous cross-cloud dependencies into a latency-sensitive, stateful system, the physics take over and the resilience argument inverts. The mistake is not using multiple clouds ever. The mistake is treating multicloud as a resilience principle that applies by default to everything, when the physics only support it in a narrow set of circumstances.

12. Why the Fallacy Persists

If the technical case against multicloud resilience is this clear, why does the idea persist? The answer sits at the intersection of incentives and abstraction.

Vendors benefit from it. A world in which every enterprise runs workloads across multiple cloud providers is a world in which the management tooling, the professional services, and the training and certification industry all have a much larger addressable market. The multicloud narrative is good for the ecosystem around the clouds even when it is not good for the customers of those clouds.

Governance and procurement processes amplify it. Single vendor dependency looks like a risk on a risk register. It attracts scrutiny from auditors and regulators who are applying frameworks designed for an era of owned data centres, where vendor dependency meant something physically concrete. Spreading spend across multiple vendors looks like it addresses that risk, regardless of whether it actually does. The risk register gets a green tick and nobody has to understand the physics.

Management abstraction enables it. The further a decision-maker is from the operational reality of running a distributed system under load, the more plausible the multicloud argument sounds. It has the form of diversification, which is a concept that transfers well from financial portfolio theory, and the people approving the strategy are often more familiar with portfolio theory than with the CAP theorem. Portfolio diversification works because the cost of holding additional assets is low and the correlation between their returns is less than one. Neither condition holds for cloud providers: the cost of spanning architectures is high, and many failure modes are environmental or workload-driven rather than provider-specific, making the correlation much higher than the diversification argument assumes.
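The correlation point can be made numerically. Treating each provider's outage as a yes or no event with probabilities p1 and p2 and correlation rho, the chance that both fail together is p1·p2 + rho·sqrt(p1(1−p1)·p2(1−p2)). The 1% figures and the correlation values below are hypothetical, chosen only to show how quickly the diversification benefit evaporates.

```python
import math


def p_both_fail(p1: float, p2: float, correlation: float) -> float:
    """Joint failure probability of two correlated yes/no events."""
    return p1 * p2 + correlation * math.sqrt(p1 * (1 - p1) * p2 * (1 - p2))


P = 0.01  # hypothetical per-provider outage probability

for rho in (0.0, 0.3, 0.7, 1.0):
    print(f"correlation {rho:.1f}: P(both fail) = {p_both_fail(P, P, rho):.5f}")
```

With zero correlation the joint failure is a hundred times rarer than a single failure. With correlation of one, as when a shared DNS or identity dependency takes both down, the second provider buys nothing at all.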

The result is a generation of architectures that are more complex, more expensive, and paradoxically more fragile than they needed to be, built to satisfy a resilience narrative that does not survive contact with the physics of the systems it purports to protect.

13. The Cost Argument Nobody Runs Honestly

The commercial case for multicloud is almost always presented as leverage: two providers competing for your business, driving down your costs, giving you the ability to shift workloads to whoever offers the better deal. It sounds like rational procurement. It is not, and the arithmetic does not survive scrutiny.

Cloud pricing is volume-dependent. Committing your entire workload to a single provider gives you the negotiating position to extract meaningful discounts, reserved instance pricing, and enterprise agreement terms that reflect the full weight of your spend. Splitting that workload evenly across two providers halves your volume with each, weakening your position with both. You are not simply splitting one bill into two. You are paying a complexity tax on top of two smaller bills, each priced at a tier below where your consolidated spend would have placed you. The multicloud discount strategy does not reduce your costs. It increases them while creating the operational overhead documented at length throughout this piece.
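The arithmetic is easy to run for yourself. The following is a minimal sketch under stated assumptions: the discount tiers, the 10% complexity overhead, and the function names are all hypothetical, invented for illustration, and do not reflect any provider's actual price list.

```python
# Hypothetical volume tiers: (minimum annual list-price spend, discount rate).
DISCOUNT_TIERS = [
    (0, 0.00),
    (1_000_000, 0.10),
    (5_000_000, 0.20),
    (10_000_000, 0.30),
]


def discount_rate(annual_spend: float) -> float:
    """Return the discount for the highest tier the spend qualifies for."""
    rate = 0.0
    for minimum, tier_rate in DISCOUNT_TIERS:
        if annual_spend >= minimum:
            rate = tier_rate
    return rate


def net_cost(annual_spend: float) -> float:
    """List-price spend after the volume discount is applied."""
    return annual_spend * (1.0 - discount_rate(annual_spend))


def single_provider_cost(total_spend: float) -> float:
    """All spend consolidated with one provider."""
    return net_cost(total_spend)


def split_provider_cost(total_spend: float, share: float = 0.5,
                        complexity_overhead: float = 0.10) -> float:
    """Spend split across two providers, plus an assumed overhead for
    running two estates (tooling, staff, coordination)."""
    first = net_cost(total_spend * share)
    second = net_cost(total_spend * (1.0 - share))
    return (first + second) * (1.0 + complexity_overhead)


if __name__ == "__main__":
    total = 10_000_000
    print(f"single provider: {single_provider_cost(total):,.0f}")
    print(f"split 50/50:     {split_provider_cost(total):,.0f}")
```

Under these invented tiers, 10 million of list-price spend costs 7 million when consolidated and 8.8 million when split evenly, before counting any data transfer between the two estates. The exact figures matter less than the shape: substitute your own tiers and overhead estimate and see whether the split ever comes out ahead.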

The alternative commercial dynamic is when a provider wants your business badly enough to buy it. A sufficiently aggressive discount from a second provider can look compelling in a spreadsheet, and if quality is genuinely equivalent and the workload is truly portable, chasing that discount is a legitimate decision. But if you are at that point, the honest conclusion is not multicloud. It is a migration. A clean exit from one provider to another, executed with discipline, preserves your volume position with the new provider, eliminates the operational complexity of spanning two estates, and leaves you with an architecture you can actually reason about. The temporary pain of a migration is a one-time cost. The permanent pain of a hybrid multicloud estate compounds indefinitely.

The right posture is to commit fully to one provider with a deliberate, documented strategy to exit if the commercial or technical relationship deteriorates. That exit strategy should be an architectural discipline: avoiding proprietary lock-in where alternatives exist at equivalent quality, maintaining infrastructure as code that could be retargeted, and understanding which managed services have no equivalent elsewhere so that each one is a conscious decision. It is not a running multicloud deployment. An exit strategy held in reserve costs almost nothing. A multicloud deployment run in production costs you every single day in complexity, coordination overhead, and reduced negotiating leverage with both providers simultaneously.

14. The Honest Risks That Remain

None of this is to say that a single-cloud architecture is risk-free. The genuine risks are worth naming clearly, because conflating them with the multicloud resilience argument obscures both.

A regional cloud outage is possible. A sustained failure of an entire provider region would take down everything running in it. This has not happened to a major provider’s regional infrastructure in a way that a well-architected multi-AZ deployment could not survive, but the theoretical exposure exists. If your business cannot tolerate any exposure to that scenario, and if that concern is strong enough to justify the costs and complexity of a spanning architecture, then that is a legitimate engineering decision. It should be made with clear eyes about what the costs actually are, not on the basis of a narrative that elides the physics.

Pricing risk is real and under-discussed. A single-provider dependency means you are negotiating from a position of high switching cost. Over a long enough time horizon, this may prove more consequential than any technical failure mode, and it deserves to be treated as a commercial risk with its own mitigation strategy rather than being folded into a technical resilience argument where it does not belong.

Talent and succession concentration is real. An architecture that is deeply optimised for a single provider’s native capabilities requires engineers who understand that provider at depth. That talent is scarce and mobile. If the people who built and understand the system leave, the knowledge leaves with them. This is addressed by documentation discipline, by infrastructure-as-code practices that make the system legible to someone encountering it fresh, and by architectural choices that favour clarity over cleverness wherever possible. None of those approaches involve a second cloud provider.

These are honest risks. They are also quite different from the resilience argument that the multicloud narrative usually presents, and they require different responses. Treating them as justification for a spanning architecture is a category error that adds the costs of multicloud without addressing the risks that actually exist.

15. What the Conversation Is Really About

The multicloud resilience fallacy is ultimately a symptom of a broader pattern: the tendency to apply frameworks from one domain to another without checking whether the underlying assumptions transfer. Financial diversification works because asset return correlation is meaningfully less than one and the cost of holding multiple assets is low relative to the risk reduction achieved. Cloud provider diversification fails on both counts for the workloads where the argument is most commonly made.

The engineers who understand this tend to be the ones who have operated systems at scale under real failure conditions, who have been paged at 2am when a multicloud architecture failover did not go as planned, who know from experience that complexity is itself a failure mode and that the fastest path to recovery under pressure is a system you fully understand. The executives who propagate the multicloud resilience narrative tend to be the ones who have read about cloud resilience strategy rather than lived it.

Resilience is achieved through isolation and simplicity. Multicloud offers neither. If you cannot survive a failure in one cloud, adding a second will not save you. It will give you more ways to fail and less clarity about which one you are currently experiencing.

That gap between abstraction and operational reality is where a lot of expensive architectural mistakes are made. Multicloud resilience is one of the cleaner examples, because the physics are explicit enough that you can point to the number: 30 milliseconds. That is the latency. That is why it does not work for synchronous stateful workloads. The rest is just the explanation of what the number means, and why no amount of architectural cleverness changes it.
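For readers who want to see what the number does to a workload, here is a back-of-the-envelope sketch. It assumes the 30 milliseconds is the round trip each synchronous commit must wait for, that the commits in question are serialized (a single hot row, lock, or ledger), and that an in-region round trip is around 1 millisecond. The last figure and the function name are assumptions for illustration.

```python
def max_serial_commits_per_second(round_trip_ms: float) -> float:
    """Upper bound on serialized synchronous commits per second when every
    commit must wait one full round trip for a remote acknowledgement."""
    if round_trip_ms <= 0:
        raise ValueError("round trip must be positive")
    return 1000.0 / round_trip_ms


if __name__ == "__main__":
    in_region_ms = 1.0        # assumed round trip between nearby zones
    cross_provider_ms = 30.0  # the figure discussed in this piece
    for label, rtt in (("in-region", in_region_ms),
                       ("cross-provider", cross_provider_ms)):
        ceiling = max_serial_commits_per_second(rtt)
        print(f"{label:>14}: {rtt:5.1f} ms round trip, "
              f"at most {ceiling:,.0f} serialized commits per second")
```

Thirty milliseconds caps a serialized synchronous writer at about 33 commits per second, against roughly 1,000 under the assumed in-region figure. Batching and parallelism can raise aggregate throughput for independent writes, but they do not shorten the wait for any single contended commit.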