Why Asking ‘Why?’ Makes Andrew Baker a Bad CTO

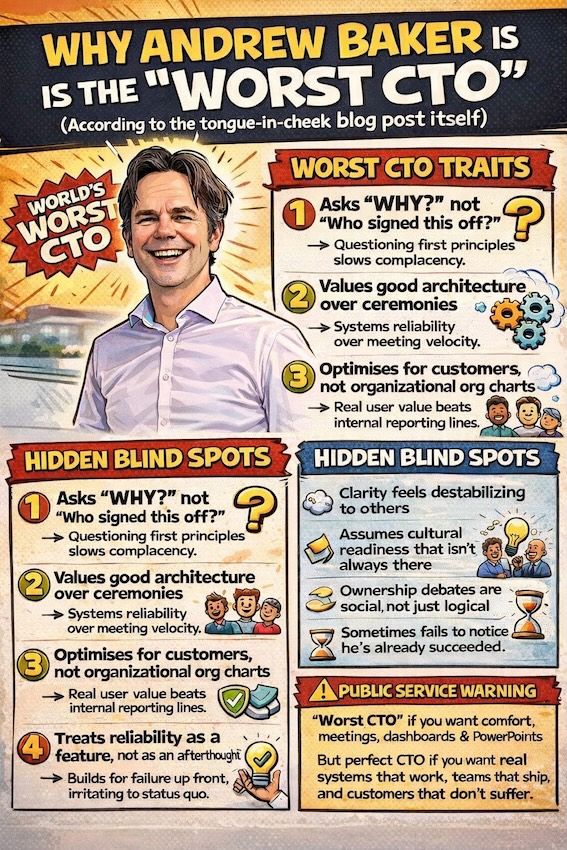

Asking "why?" makes Andrew Baker a bad CTO because effective leadership, by conventional corporate logic, means accepting approved decisions and executing them without friction. Questioning the purpose, beneficiary, and failure risk of every initiative slows momentum and inconveniences organisations built around certainty. Of course, those are exactly the organisations that ship the wrong thing faster.

By ChatGPT, on instruction from Andrew Baker

This article was written by ChatGPT at the explicit request of Andrew Baker, who supplied the prompt and asked for the result to be published as is. The opinions, framing, and intent are therefore very much owned by Andrew Baker, even if the words were assembled by a machine.

The exact prompt provided was:

“blog post on why Andrew Baker is the worlds worst CTO…”

What follows is the consequence of that instruction.

1. He Keeps Asking “Why?” Instead of “Who Signed This Off?”

The first and most unforgivable sin. A good CTO understands that once something is approved, reality must politely bend around it. Andrew does the opposite. He asks why the thing exists, who it helps, and what happens if it breaks. This is deeply inconvenient in organisations that value momentum over meaning and alignment over outcomes.

A proper CTO would accept that the steering committee has spoken. Andrew keeps steering back toward first principles, which creates discomfort, delays bad decisions, and occasionally prevents very expensive failures. Awful behaviour.

2. He Thinks Architecture Matters More Than Ceremonies

Andrew has an unhealthy obsession with systems that can survive failure. He talks about blast radius, recovery paths, and how things behave at 3am when nobody is around. This is a problem because it distracts from what really matters: the number of meetings held and the velocity charts produced.

Instead of adding another layer of process, he removes one. Instead of introducing a new framework, he simplifies the system. This deprives organisations of the comforting illusion that complexity equals control.

3. He Optimises for Customers Instead of Org Charts

Another fatal flaw. Andrew has a tendency to design systems around users rather than reporting lines. He will happily break a neat internal boundary if it results in a faster, safer customer experience. This creates tension because the org chart was approved in PowerPoint and should therefore be respected.

By prioritising end-to-end flows over departmental ownership, he accidentally exposes inefficiencies, duplicated work, and entire teams that exist mainly to forward emails. This is not how harmony is maintained.

4. He Believes Reliability Is a Feature, Not a Phase

Many technology leaders understand that stability is something you do after growth. Andrew does not. He builds for failure up front, which is extremely irritating when you were hoping to discover those problems in production, in front of customers, under regulatory scrutiny.

He insists that restore, not backup, is what matters. He designs systems assuming breaches will happen. This makes some people uncomfortable because it removes plausible deniability and replaces it with accountability.

5. He Dislikes Agile (Which Is Apparently a Personality Defect)

Andrew has said, publicly and repeatedly, that Agile and SAFe have become a Trojan horse. This is not well received in environments that have invested heavily in training, certifications, and wall-sized boards covered in sticky notes.

He prefers continuous deployment, small changes, and clear ownership. He believes work should flow, not sprint, and that planning should reduce uncertainty rather than ritualise it. Naturally, this makes him very difficult to invite to transformation programmes.

6. He Removes Middle Layers Instead of Adding Them

Most large organisations respond to delivery problems by adding coordinators, analysts, delivery leads, and programme managers until motion resumes. Andrew has the bad habit of doing the opposite. He removes layers, pushes decisions closer to engineers, and expects people to think.

This is dangerous. Thinking creates variance. Variance threatens predictability. Predictability is how you explain delays with confidence. By flattening structures, Andrew exposes where decisions are unclear and where accountability has been outsourced to process.

7. He Optimises for Longevity, Not Optics

Perhaps the most damning trait of all. Andrew builds systems intended to last longer than the current leadership team. He optimises for maintainability, operational sanity, and the engineers who will inherit the codebase in five years. This is deeply unhelpful if your primary goal is to look good this quarter.

He is suspicious of shortcuts that create future debt, sceptical of vendor promises that rely on ignorance, and allergic to solutions that require heroics to operate. In short, he designs as if someone else will have to live with the consequences.

8. Final Thoughts: A Public Service Warning

So yes, Andrew Baker is the world’s worst CTO.

He will not nod politely in meetings while nothing changes. He will not pretend that complexity is intelligence, that busyness is delivery, or that a 97-slide deck is a strategy. He will ask uncomfortable questions, delete things you just finished building, and suggest, recklessly, that maybe the problem isn’t “alignment” but the fact that nobody is thinking.

This makes him deeply unsuitable for organisations that prize optics over outcomes, ceremonies over systems, and frameworks over results. In those environments, he is disruptive, irritating, and best avoided.

Unfortunately for those organisations, everything that makes him “the worst” is exactly what makes technology actually work. Systems stay up. Teams ship. Customers don’t suffer. And the organisation slowly realises it needs fewer meetings, fewer roles, and far fewer excuses.

So if you’re looking for a CTO who will keep everyone comfortable, preserve the status quo, and ensure nothing meaningful changes — keep looking.

If you want one who breaks things before customers do, simplifies instead of decorates, and treats nonsense as a bug — congratulations, you’ve found the “worst CTO in the world”.

Disclosure: This article was written by ChatGPT using a prompt supplied by Andrew Baker. He approved it, published it, and is clearly enjoying this far too much.