The 10 Nil Paradigm: Why Your 8-Hour Outage Isn’t a “Blip”

Calling an 8-hour outage a "blip" is a form of organisational self-deception. When a system fails for eight hours, real users lose real productivity, trust erodes, and revenue disappears. A 10-nil loss forces honest accountability; your outage deserves the same unflinching scrutiny, not polite silence that allows the same failure to repeat itself.

1. Who Farted?

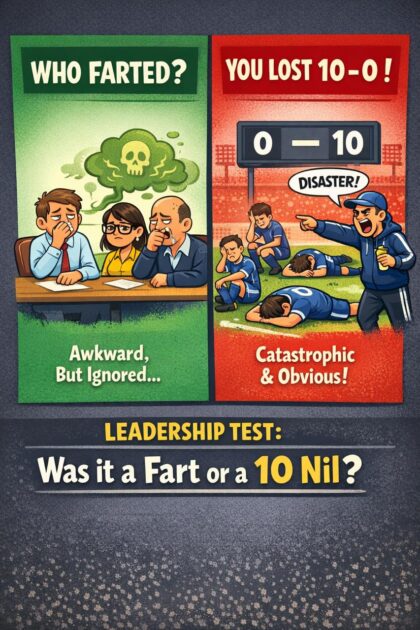

If someone farts in a meeting room, everyone notices immediately. It is uncomfortable, distracting, and changes the entire atmosphere, yet strangely nobody wants to address it directly. People glance at each other, suppress reactions, maybe make a weak joke, and then carry on as if nothing really happened, even though everyone knows exactly what just occurred.

Now compare that to football. If your team loses 10 nil, there is no ambiguity and no polite silence. Nobody pretends it was “just one of those days” or brushes it aside as a minor issue. It is a public, undeniable collapse that gets dissected from every angle, with coaches questioned, players exposed, and the entire performance scrutinised in detail. The failure is absolute, visible, and impossible to ignore, which is exactly why it drives accountability and change.

So the real question is why we treat outages like the meeting room rather than the scoreboard, when the consequences in technology are far closer to the latter than the former.

2. The 8-Hour Outage Is 10 Nil

If your platform is down for eight hours, that is not a minor incident and it is certainly not a blip. It is the equivalent of losing 10 nil, because your clients could not transact, your systems did not degrade gracefully, your controls did not contain the blast radius, and your architecture failed to absorb the shock under pressure.

Inside most organisations, however, this is not how the situation is discussed. The language softens almost immediately, and what should be called a systemic failure becomes “an unfortunate sequence of events,” “a perfect storm,” or “a complex issue under investigation.” The scoreboard shows 10 nil, but the internal narrative quietly rewrites it into something far less severe. That gap between reality and storytelling is exactly where weak engineering cultures take root and survive.

3. Effort Is Not the Metric

There is always a defence when things go wrong, and it almost always centres on effort. Teams worked hard, engineers gave up their weekends, people stayed online through the night, and everyone did everything they could to resolve the issue as quickly as possible. All of that is true, and all of it is irrelevant to the outcome.

Effort is not the metric that defines reliability; outcomes are, because outcomes are what clients experience and what systems are judged on. In football, nobody justifies a 10 nil loss by pointing out how hard the players tried, because the result already tells the full story. The same principle applies to engineering systems, where the only meaningful question is whether the system held or collapsed under real conditions.

This reframes the conversation in a way that is uncomfortable but necessary, because it forces teams to ask why their system is even capable of losing 10 nil instead of focusing on how hard they worked during the recovery.

4. Outages Are Not Bad Luck

Serious failures do not happen because the universe randomly selected your system for punishment, and they are not unpredictable anomalies that appear without warning. They happen because the system allowed them to happen, which means the capability for failure already existed before the incident ever occurred.

Outages are therefore not acts of God but expressions of negative capability embedded in architecture, process, and culture, with chance simply acting as the trigger that exposes those weaknesses. Describing them as “bad luck” is effectively an admission of low control, which is a far more dangerous position than the outage itself because it removes accountability for eliminating the underlying causes.

High-performing environments operate on a very different assumption: if something happened, it was always possible, and if it was possible, it should have been identified, constrained, or eliminated before it reached production scale.

5. The Real Problem Is Cultural

The reason outages are downplayed is rarely technical and almost always cultural, because calling something 10 nil introduces immediate discomfort and forces a level of honesty that many organisations are not prepared to deal with. It challenges leadership decisions, exposes prioritisation failures, and brings attention to design shortcuts and operational debt that have been tolerated over time.

To avoid that discomfort, organisations minimise and reframe, softening the language, protecting feelings, and avoiding direct statements that might create tension within teams. This behaviour is often justified as preserving morale, but in practice it protects fragility and allows the same classes of failure to repeat.

A team that cannot openly state that a failure was unacceptable will never build systems that consistently meet acceptable standards, because improvement depends on accurate problem definition and a willingness to confront reality without dilution.

6. Clients Experience the Scoreboard

Clients do not have visibility into internal operations, incident bridges, or the level of effort teams are putting in behind the scenes, and they are not concerned with the complexity being managed during an outage. What they experience is the outcome of the system in the moments that matter to them.

They experience the inability to access their money, failed transactions, and a platform that does not function when required, and from that experience they form a simple and durable judgement about whether the service can be trusted. There is no partial credit in that interaction and no recognition for near misses or rapid recovery attempts, because from the client’s perspective availability is binary: the service either worked when they needed it or it did not.

From the outside, the scoreboard is the only reality that exists, and if it reads 10 nil, that is the truth that defines your brand regardless of internal narratives.

7. The 10 Nil Mindset

Adopting the 10 nil mindset requires a deliberate shift in how failures are framed, discussed, and acted upon, starting with the refusal to soften language or immediately move into defensive explanations. The scoreline must be accepted fully and explicitly before any deeper analysis begins, because clarity about the magnitude of failure is what drives meaningful change.

Once that is established, the focus shifts to eliminating entire categories of failure rather than improving reaction speed, which involves analysing blast radius, detection gaps, recovery limitations, and the fundamental question of why the system was capable of entering a failure state at all. This approach forces structural thinking instead of reactive thinking, and it drives engineering decisions that reduce risk at the source rather than managing symptoms.

The goal is not to recover better next time, but to ensure that the same class of failure cannot happen again, which is the only way to move from resilience theatre to actual reliability.

8. Leadership Is Not There to Comfort You After 10 Nil

Leadership responsibility in the context of outages is not to soften the narrative or shield teams from discomfort, but to maximise the learning extracted from failure, aggressively reduce the blast radius of future incidents, and ensure that monitoring, controls, and system design evolve faster than the risks they are meant to contain. This requires a level of directness that can feel uncomfortable, because it involves stating clearly that an eight-hour outage is unacceptable, not as a criticism of effort but as a recognition that the system should never have allowed it to occur.

Being liked is not the objective in these moments. Trust is built through consistency and honesty rather than validation, and teams ultimately respond better to clear expectations than to ambiguity. Punishing individuals is a simplistic response that avoids systemic issues, while ignoring failure entirely allows those issues to compound. Effective leadership sits between the two: it acknowledges the failure fully and drives structural change without resorting to blame.

In most environments, funding and resources are not the primary constraints on stability, because the real limitations are ambition, clarity of thought, and the willingness to confront uncomfortable truths early instead of explaining them away later. Many systems are fragile not because they lack investment, but because fragility has been normalised, which creates a culture where repeated failure becomes expected rather than eliminated.

It is entirely possible to run a significant portion of enterprise infrastructure on a cluster of Raspberry Pi devices and achieve higher uptime and durability than many far more complex production estates, because constraints force intentional design, strong observability, and disciplined engineering practices, whereas uncontrolled complexity introduces failure modes that are difficult to detect and even harder to recover from.

Teams are not demotivated by hard truths when those truths are paired with visible progress, because psychological safety is built on clarity, transparency, and consistent follow-through rather than on the avoidance of difficult conversations. Engineers become disengaged when failures are obscured or rationalised, but they respond positively when problems are clearly defined and systematically removed.

No team enjoys being woken up at 3am to deal with issues that should have been resolved years earlier. Those incidents are rarely random; they are the predictable outcome of accumulated neglect that eventually surfaces under load. Most teams can tolerate sustained periods of uncomfortable but necessary dialogue if they can see stability improving, incidents decreasing, and a tangible shift in how systems behave over time.

A 10 nil scoreline cannot become a normal operating condition, because once it is normalised it becomes self-perpetuating. It has to be deliberately pushed into the past and treated as a reference point for how the organisation used to operate, not how it continues to function.

9. Design Teams Already Live This Reality

Design teams operate in a fundamentally different environment where bad outcomes are immediately visible and impossible to hide, because poor flows, confusing interactions, and broken experiences are exposed the moment a feature reaches users. Feedback arrives quickly and publicly, often through social media, and if the experience is poor there is no abstraction layer to soften the impact or reinterpret the result.

Designers are therefore conditioned to accept direct, continuous critique as part of the job, and they understand that bad design is not something to be reframed or defended but something to be acknowledged and fixed. When a feature fails from a user experience perspective, it is treated as truth rather than narrative, and the expectation is that it will be improved without delay.

Engineering should operate under the same principles, but it often does not because failure is less directly visible and can be interpreted through layers of reporting, metrics, and narrative. This creates space for organisations to normalise failure by relying on proxy indicators such as uptime percentages or aggregated downtime statistics, which can make systemic issues appear acceptable when they are not.
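To make the proxy-metric problem concrete, here is a small Python sketch of the arithmetic. It assumes a 365-day year, a hypothetical 99.9% availability target, and, for the second view, a hypothetical eight-hour business day that the outage covered entirely; the function names are illustrative only.

```python
# Illustrative sketch: the same outage scored against two different periods.
# Assumptions: a 365-day year (no leap years) and a hypothetical 99.9% target.

HOURS_PER_YEAR = 365 * 24  # 8,760 hours


def availability(downtime_hours: float, period_hours: float = HOURS_PER_YEAR) -> float:
    """Percentage of the period during which the service was up."""
    return 100.0 * (period_hours - downtime_hours) / period_hours


def downtime_budget(target_pct: float, period_hours: float = HOURS_PER_YEAR) -> float:
    """Hours of downtime a given availability target permits over the period."""
    return period_hours * (100.0 - target_pct) / 100.0


outage_hours = 8.0

# Aggregate view: one eight-hour outage averaged across a whole year.
print(f"Annual availability: {availability(outage_hours):.3f}%")
print(f"Downtime allowed by a 99.9% target: {downtime_budget(99.9):.2f} hours")
print("Meets 99.9% target:", availability(outage_hours) >= 99.9)

# Client view: the same outage measured over the (assumed) eight-hour
# business day it landed on.
print(f"Availability during that business day: "
      f"{availability(outage_hours, period_hours=8.0):.1f}%")
```

Averaged over a year, the outage reads as roughly 99.909% availability and fits inside a 99.9% budget of about 8.76 hours, so the headline metric says the target was met. Measured over the business day it hit, the same event is 0%. Same incident, two very different scorelines.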

In environments where leadership is non-technical, this tendency becomes more pronounced, because there is a natural inclination to rely on audits and formal processes to build an understanding of system health. This is a dangerous path, because there is almost no correlation between a clean audit and a well-designed architecture. Highly resilient, well-engineered systems can fail documentation and process audits, while technically bankrupt systems can pass audits with excellent scores simply by conforming to expected patterns on paper.

The only reliable way to understand the extent of architectural failure is to bring in independent, senior engineers who have the experience to interrogate systems in depth and identify the real sources of risk. These individuals are able to traverse complex and often poorly structured environments, but their effectiveness depends entirely on the honesty and cooperation of the incumbent team, because without that transparency the true shape of the system remains obscured.

More recently, there is an additional tool that can accelerate this process, which is the use of AI to analyse code repositories and surface technical debt, architectural weaknesses, and latent risks. When applied with the right context and prompting, this approach can provide a surprisingly accurate view of system health and highlight issues that would otherwise take significant time to uncover manually.

The combination of independent expertise and deep technical analysis, whether human or AI-assisted, creates a far more accurate picture than audits or proxy metrics ever will, and it aligns engineering with the same reality that design teams have always operated in, where failure is visible, undeniable, and must be addressed directly.