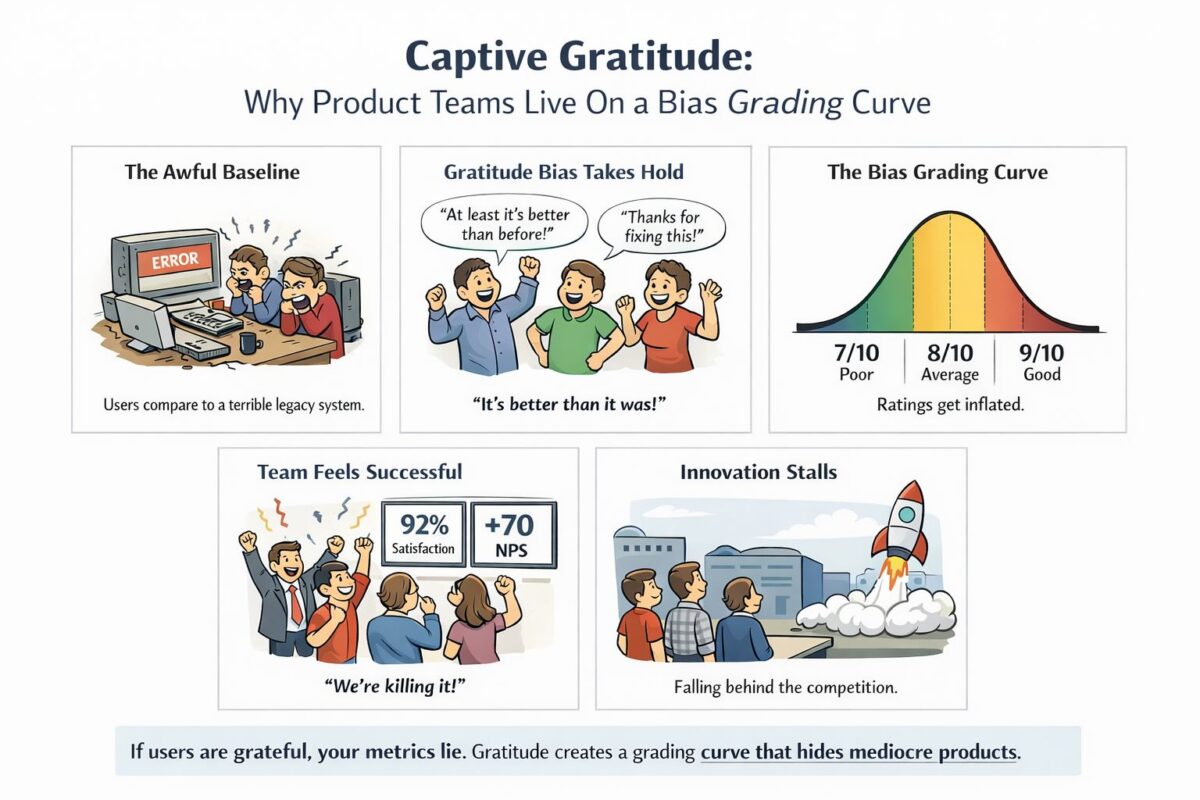

There is a performance bias hiding in plain sight inside every organisation that has moved to product-aligned engineering. It does not show up as a number on a dashboard, a flag in a talent calibration session, or a red line in an engagement survey. It accumulates quietly, year on year, in the gap between what a performance grade is supposed to mean and what a manager is actually capable of knowing. I call it captive gratitude, and once you understand the structural conditions that produce it, you will find it extraordinarily difficult to unsee.

1. The Captivity Problem

When you embed technologists inside product teams, you are making a deliberate organisational choice. You want engineers, designers, and QA professionals to feel the pull of the customer problem, to sit alongside product managers, and to be accountable to outcomes rather than outputs. That logic is sound and the commercial benefits are well documented. The unintended consequence is that your UX designer now reports to a product lead who has exactly one UX designer in their world. Your two Java engineers are the only Java engineers that product lead will ever manage. Your frontend engineer exists, from that leader’s perspective, as a singular and irreplaceable atom in a very small universe.

When appraisal season arrives, that product lead faces a structurally impossible task. They are being asked to rank and rate people for whom they have no comparative basis of evaluation. They know whether their Java engineers shipped on time. They know whether the UX designer was pleasant to work with and whether the designs looked good to them as a non-designer. What they cannot know, because they have never managed anyone else doing the same job, is whether those same people sit in the top quartile, the median, or the bottom third of their profession.

The result is captive gratitude. Because the product lead depends entirely on this one person to do this one specialised thing, they are unconsciously biased toward protecting them, affirming them, and rating them generously. This is not corruption. It is not favouritism in the traditional sense. It is the entirely rational response of a manager who knows that losing their only frontend engineer would be operationally catastrophic, and whose brain has quietly decided that the safest way to retain them is to signal, repeatedly and sincerely, that they are excellent.

2. How Dependency Inflates Ratings

The inflation that captive gratitude produces is not symmetrical across all managers, and that asymmetry is precisely what makes it so difficult to correct at an organisational level. Product heads and technology leaders bring fundamentally different distortions to the appraisal table, and when you embed technologists inside product teams, you hand the appraisal pen to the product head by default.

Product leaders tend to overrate speed and agreeableness. The engineer who shipped fast, who said yes readily, who never created friction in the standup, who made the product manager’s life easier at every turn — that person reads as excellent. The engineer who pushed back on timelines, who raised technical debt concerns before agreeing to a feature request, who occasionally slowed the team down in pursuit of something more architecturally durable — that person reads as difficult, even when they are objectively the stronger technologist. The product leader is not being dishonest. They are applying the only lens available to them, and that lens produces inflated ratings for the wrong reasons.

Technology leaders, by contrast, tend to bias toward quality and operational excellence. They notice the engineer who refactors before adding features, who writes tests that actually catch regressions six months later, who thinks carefully about the person who will be on call at two in the morning when something breaks in production. They can see the difference between code that works today and code that will continue to work as the system scales, and they weight that distinction in ways that product leaders structurally cannot.

Neither lens is complete on its own. A technology leader who ignores business outcomes is just as dangerous as a product leader who ignores technical craft. But when only the product lens is applied to a technologist’s performance review, ratings inflate for agreeableness and speed while the deeper and more durable qualities of engineering excellence go unmeasured and unrewarded. Your strongest engineers are typically the first to notice that the grading curve is rewarding the wrong things, and they do not forget it.

3. Why Functional Pools See More Clearly

The traditional alternative to product embedding is the functional pool: all your Java engineers in one chapter, guild, or centre of excellence, managed by someone who has spent a career around Java engineers. The critique of this model is well rehearsed and largely fair. Pooled engineers can feel distant from the customer problem, optimise for technical elegance over business outcome, and become a shared services backlog that product teams grow to resent.

But functional pools have one structural capability that embedded models destroy almost entirely, and that capability is the ability to compare honestly. When a technology leader manages twelve Java engineers, they develop a clear and calibrated sense of the distribution across the cohort. They know who writes the clearest and most maintainable code, who mentors most effectively, who handles ambiguity with the most composure, and who grows the fastest when placed under genuine pressure. That comparative baseline is not a luxury or an incidental benefit of the pooled model. It is the foundational requirement for a performance conversation that is accurate, developmentally useful, and fair to the individual on the other side of the table.

Without that baseline, you are not conducting performance management. You are conducting performance theatre, where both parties go through the motions of a rigorous evaluation that was, in structural terms, impossible before it began.

4. The Rotation Prescription

The solution is not to abandon product alignment and return to functional silos. The embedded model produces real and compounding benefits — faster feedback loops, stronger product intuition in engineering, and a shared accountability for outcomes that purely pooled models rarely achieve. Reversing it wholesale would simply trade one set of problems for a different and arguably worse set. The solution is to deliberately and systematically restore the comparative lens that product embedding removes, by moving people across team boundaries in structured, lightweight, and repeated ways.

The mechanism is rotation. Not reporting line changes, not full reorganisations, and not secondments that last a year and feel to the individual like internal exile. The rotation prescription calls for short-cycle, intentional swaps where your UX designer spends three weeks contributing to another product team’s project, or your senior Java engineer participates in a community of practice initiative that places them alongside their peers from across the organisation. The rotation does not need to be full-time to be effective. Two days a week for a quarter, or a single project-based assignment with clear deliverables, is sufficient to generate the comparative signal that your appraisal process is currently missing entirely.

Technology leaders have a specific and non-delegable role to play here. The rotation calendar cannot be left to goodwill negotiations between product heads, because product heads have every incentive to resist it. The technology leadership layer must own the rotation mandate, fund the communities of practice with real capacity rather than aspirational goodwill, and treat cross-team exposure as a governance requirement rather than a development gesture. Without that structural ownership, the rotation prescription will be absorbed and neutralised by delivery pressure within a single quarter.

5. What Rotation Actually Produces

When your UX designer works alongside the UX designer from another product team, both managers suddenly have something they lacked before: a reference point against which to calibrate their own instincts. When your Java engineer presents at a community of practice and fields detailed technical questions from their peers across the organisation, their technology lead sees them operating in a context that product delivery alone never provides. The comparison that results is not punitive or threatening. It is informative — for the manager, for the individual, and for the organisation’s understanding of where genuine technical strength actually lives.

The objection to rotation will be immediate and entirely predictable. Product managers will argue they cannot afford to lose their people even part-time. Delivery schedules will be cited with great conviction. Committed roadmap items will be invoked as if they represent physical laws rather than negotiated plans. This objection deserves to be taken seriously and then firmly overruled, because the cost of inflated and inaccurate ratings compounds across years in ways that are far more expensive than any short rotation. The engineer who discovers through lateral exposure that their skills are genuinely in the top quartile of their profession will perform differently, develop more intentionally, and stay longer. The engineer who receives inflated ratings for the wrong reasons will eventually sense the hollowness of that feedback and seek an honest signal from the market instead.

The practical implementation requires three things working together. First, a rotation calendar owned at the technology leadership level and treated as a standing operating requirement rather than an annual aspiration. Second, communities of practice that are genuinely resourced, with time protected in capacity plans rather than relegated to something that happens after hours if people happen to feel like it. Third, appraisal processes that explicitly require input from managers and technical leads outside the individual’s home team, so that cross-team exposure is not merely a development gesture but a prerequisite for a complete and credible evaluation.

6. Cross-Organisation Calibration

Another structural problem appears when organisations stop comparing technologists across the entire technology estate. Product teams often rate their engineers relative to the local environment of that team, not against the broader technical organisation. The result is predictable. A business technology team that has had a difficult year will suddenly produce a cluster of “exceptional” ratings as managers attempt to compensate for low morale or prior failures, while a central platform or infrastructure team that quietly delivered reliability all year nominates only a handful of exceptional performers. Without a central technology view, these grading curves drift apart and the system loses credibility.

Technology leadership must therefore treat performance grading the same way it treats architecture standards or production reliability: as an organisation-wide discipline. Cross-team calibration matters because performance ratings are only meaningful when the same bar is applied everywhere. If product teams nominate twenty percent of their engineers as exceptional after a year of instability while central teams nominate single-digit percentages after a year of operational excellence, the system is already broken. The role of central technology leadership is not to dictate outcomes but to maintain a consistent view of engineering quality across the organisation. When teams perform badly, whether they sit in product units or central platforms, that reality must arrive in the grading distribution. Otherwise the organisation quietly teaches everyone the wrong lesson: outcomes do not matter, narrative does.
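
For readers who want to see what a first-pass calibration check might look like, here is a minimal sketch in Python. It compares each team's share of "exceptional" ratings against the organisation-wide share and flags the teams that drift too far from it. Everything in it is an assumption made for illustration: the team names, the rating labels, the headcounts, and the ten-point tolerance are hypothetical, and a flag is a prompt for a calibration conversation, not a verdict.

```python
def exceptional_share(ratings):
    """Return the fraction of ratings labelled 'exceptional' (0.0 for an empty list)."""
    if not ratings:
        return 0.0
    return sum(1 for r in ratings if r == "exceptional") / len(ratings)


def flag_drift(team_ratings, tolerance=0.10):
    """Flag teams whose exceptional share differs from the organisation-wide
    share by more than `tolerance` (an illustrative threshold, not a standard)."""
    all_ratings = [r for ratings in team_ratings.values() for r in ratings]
    org_share = exceptional_share(all_ratings)
    flagged = {}
    for team, ratings in team_ratings.items():
        share = exceptional_share(ratings)
        if abs(share - org_share) > tolerance:
            flagged[team] = share
    return org_share, flagged


# Hypothetical data: a product team of 10 and a central platform team of 30.
team_ratings = {
    "product_a": ["exceptional"] * 4 + ["meets"] * 6,
    "platform": ["exceptional"] * 2 + ["meets"] * 28,
}

org_share, flagged = flag_drift(team_ratings)
print(f"organisation-wide exceptional share: {org_share:.2f}")
for team, share in flagged.items():
    print(f"{team}: {share:.2f} exceptional, outside tolerance")
```

A check like this only surfaces the divergence. Deciding whether a team genuinely earned its distribution still requires the cross-team knowledge that central technology leadership is there to provide.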

7. The Cost of Getting This Wrong

The goal of everything described above is not to create instability or to undermine the coherence and velocity of product teams. It is to ensure that when you sit across from someone in their annual performance conversation and tell them where they stand, you are working from an accurate picture rather than a gratitude-inflated one.

The engineers who are most harmed by this bias are rarely the ones you would expect. They are not the weaker performers, who tend to benefit from the generous comparative vacuum that captive gratitude creates. They are the strongest ones, the people who know their craft deeply enough to recognise when their evaluation does not reflect it, who are perceptive enough to understand the structural reasons why, and who have enough market optionality to act on that understanding when the frustration accumulates past a certain threshold. Captive gratitude feels kind in the moment it is expressed. In the long run, it fails the very people it is trying to protect, because it denies them the honest developmental signal they need to grow further, and it denies your organisation the accurate picture it needs to invest wisely in the people who are genuinely building its future.

Gratitude that is genuinely earned does not need to be captive. It is strong enough to survive the comparison.