Why AI Pilots Fail to Reach Production (And How to Fix It)

Most AI pilots fail to reach production because enterprises automate broken processes, accept vendor overstatements at face value, and treat pilots as success theater rather than genuine transformation exercises. Fixing this requires ruthless process redesign before automation, clear production-readiness criteria from day one, and executive accountability that extends beyond the steering committee slide deck.

Gartner predicts that more than 40% of agentic AI projects will be cancelled by the end of 2027. I think they’re being optimistic.

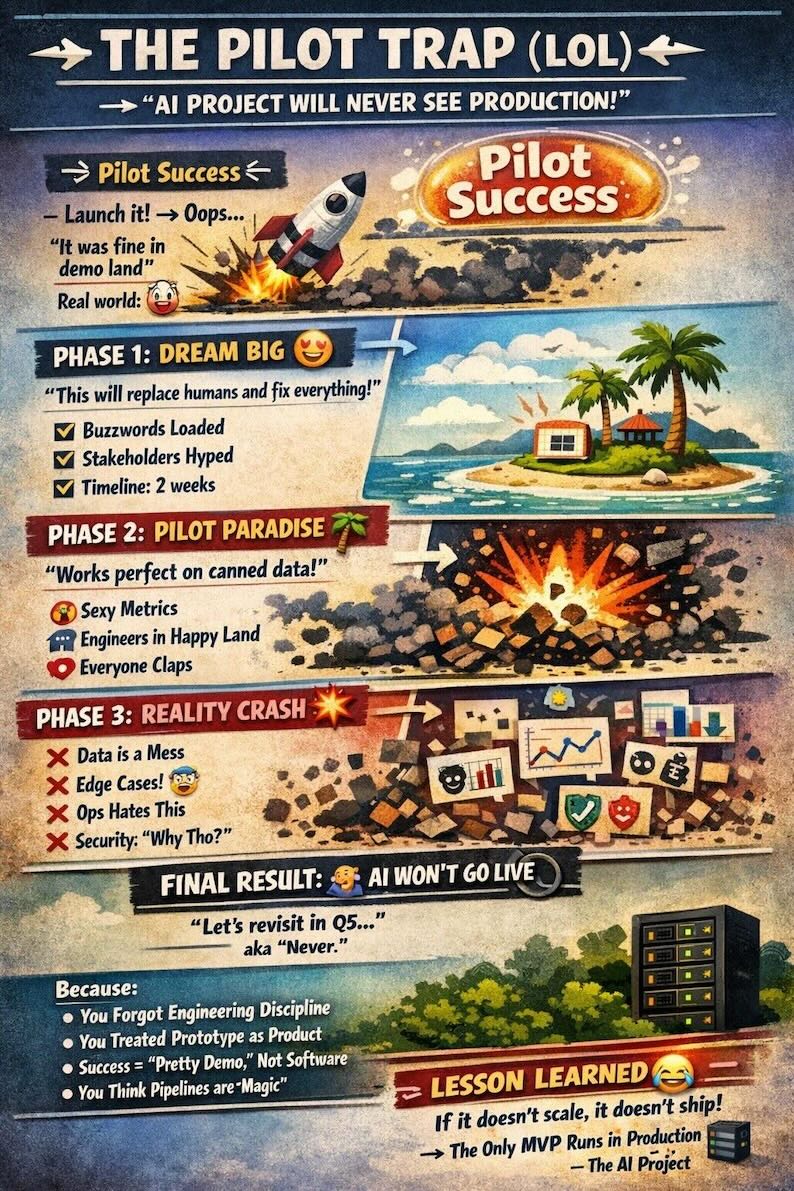

Walk into almost any large enterprise right now and you’ll find the same scene: a glossy AI pilot, a proud press release, a steering committee meeting monthly to “track progress,” and absolutely zero chance that any of it ever reaches production at scale. The pilot looks great in the boardroom deck. It just never seems to cross the finish line.

This isn’t bad luck. It’s a pattern. And it’s being driven by a perfect storm of vendor hype, institutional cowardice, and the oldest mistake in enterprise IT: automating a broken process and calling it transformation.

Let’s be honest about what’s actually happening.

1. The Vendors Are Misleading You

Not maliciously. Just commercially.

Every major cloud vendor, every AI platform company, every systems integrator with a freshly minted “AI practice” is telling you the same thing: their platform makes it easy to go from pilot to production. The demos are slick. The reference architectures look clean. The case studies are compelling, carefully selected, professionally written, and almost entirely devoid of the parts where things went wrong.

What they don’t tell you is that their platform is the easy part. The hard part is your organisation. And no vendor has a product that fixes that.

The AI pilot industrial complex has a vested interest in keeping you buying. Every pilot that doesn’t reach production is a renewal conversation, a new use case to explore, another workshop to run. The meter keeps running whether you ship or not. Meanwhile your actual security posture, your actual operational efficiency, your actual competitive position: none of that improves while you’re still running proofs of concept.

I’ve seen organisations spend two years and seven figures “exploring” AI capabilities that their competitors deployed in four months on a fraction of the budget. The gap between those two organisations isn’t technical. It’s not the model, it’s not the platform, it’s not the data. It’s the decision to actually finish something.

2. Your Governance Process Is Designed to Prevent Shipping

I want to be careful here because governance matters. In a regulated industry it matters a lot. But there is a version of enterprise governance that exists not to manage risk but to distribute blame, and it is absolutely lethal to getting AI into production.

You know the signs. The steering committee that meets fortnightly but can’t make a decision without a subcommittee review. The risk framework that was written for a different era of technology and gets applied wholesale to AI systems without any attempt to calibrate it to the actual risk profile. The legal team that blocks a deployment because nobody has specifically approved this use case before, even though the underlying risk is lower than a dozen things already running in production. The architecture review board that wants to discuss whether this is the right foundation model before they’ll sign off, as if model selection were more important than shipping.

These structures aren’t protecting your organisation. They’re protecting the people inside them. There is a meaningful difference between those two things.

Real governance asks: what are the actual risks here, what controls do we need, and how do we move forward safely? Performative governance asks: who else needs to be in this meeting before anyone can be held accountable for a decision? One of those gets AI into production. The other one generates excellent meeting minutes.

The organisations that are shipping AI at speed have not abandoned governance. They’ve redesigned it to match the pace of what they’re building. They have clear ownership, tight decision rights, and a bias toward controlled production deployment over extended piloting. They treat a well-instrumented production system as better risk management than an endlessly extended POC, because it is. You learn more about real risks from running something in production with proper monitoring than you ever will from a sandbox.

3. You’re Automating the Wrong Thing

This one is the most uncomfortable, because it’s an internal failure rather than something you can blame on a vendor or a governance committee.

The single most common reason AI pilots don’t reach production is that they were solving the wrong problem to begin with. Someone identified a process that looked automatable, stood up a pilot, got impressive demo results, and then discovered that the process was never well-defined enough to actually run without constant human intervention. Or the edge cases, which are trivial for a human and catastrophic for an agent, turn out to represent 30% of real-world volume. Or the data that looked clean in the pilot environment is a mess in production. Or the workflow the agent was designed for hasn’t been the actual workflow for six months, because it was already informally replaced by something else and nobody updated the documentation.

AI agents are brutally good at exposing process debt. Every vague step, every undocumented exception, every “we just know” piece of institutional knowledge, the agent will find it, fail on it, and wait for a human to tell it what to do. If your process isn’t clean before you automate it, you’re not building an AI system. You’re building an extremely expensive way to discover that your process is broken.

The pilots that work are built on processes that someone has already done the hard work of defining clearly. Not processes that seem like they should be automatable, but processes that actually are, because someone sat down and mapped every step, every exception, every decision point, before a single line of agent code was written.

At Capitec, the AI systems we’ve shipped into production weren’t picked because they were exciting. They were picked because the underlying process was well understood, the success criteria were unambiguous, and we knew exactly what good looked like before we started building. Boring criteria. Effective filter.

4. What Targeting Production Actually Looks Like

We made a deliberate choice to target production assets, not sandboxes. Not “innovation labs.” Not proofs of concept that live forever in a demo environment. Production assets. Real systems. Real clients.

We run realtime pen testing against our Cloudflare APIs in production, including chaining of API calls to test attack sequences the way an actual adversary would construct them, not just isolated endpoint checks. We do UX regression testing across thousands of mobile device configurations using Playwright MCP, BrowserStack and Claude, so we know with confidence when a release breaks something on a real device in the real world before a client finds it. We scan app telemetry in realtime when a client calls in, so the call center agent who picks up your call knows, before they say hello, what the problem on the account is likely to be and what to do about it. The client experience changes completely when the person helping you already understands your situation.

None of this is exotic technology. All of it required a genuine commitment to integrating AI into the way we actually deliver products, not the way we talk about delivering products. We had to change our entire persistence architecture to support realtime read offloading, so that the AI frameworks get realtime access to production data without blocking write traffic.

That is the distinction most organisations are missing. They are treating AI as a capability to be evaluated, when it is actually a structural change to how you build and operate. You don’t add AI to your existing delivery model and get the benefit. You have to reset how you work, how your teams are organised, how your processes run, and how your people think about what they’re building. That reset is uncomfortable. It requires people to let go of patterns that have worked for years. It requires leaders to be genuinely open to operating differently, not just open to the idea of it in principle.

5. The Cost of Staying in Pilot

Here’s what the pilot-forever strategy is actually costing you, in concrete terms.

Every month your AI security tooling stays in pilot is another month your security team is doing manually what could be running continuously and automatically. Every endpoint not being continuously tested is a potential gap in your posture. Every compromised client device that takes hours to detect instead of seconds is a window where real money can move.

The competitive arithmetic is straightforward and it isn’t in your favour. The organisations that shipped six months ago are now running second-generation systems, refining models on production data, and building operational muscle around how to work with AI agents effectively. You’re still in the steering committee meeting. The gap isn’t staying constant. It’s compounding.

There’s also a talent cost that doesn’t appear on any project budget. Your best engineers know the difference between an organisation that ships and one that pilots. They are watching. The ones who want to build real systems, and those are exactly the ones you most want to keep, will eventually conclude that they can build more interesting things somewhere else. A culture of perpetual piloting is a slow way to lose the people who would have helped you get out of it.

And there is a credibility cost. Every AI initiative that gets announced, piloted, and quietly shelved makes the next one harder to fund, harder to staff, and harder to get through governance. You are spending credibility you will eventually need.

6. What Actually Gets You to Production

Stop piloting things you’re not committed to shipping. This sounds obvious. It isn’t, apparently.

Before you start a pilot, answer three questions with actual specificity. What does production look like: what system, what scale, what integration points, what go-live date? What would cause you not to ship? Name the actual criteria, not vague concerns about risk. Who owns the production decision, and what do they need to see to make it?

If you can’t answer those questions before you start, you don’t have a pilot. You have a research project with a vendor’s billing address attached to it.
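One way to make those three questions hard to dodge is to write the answers down as a structured record and refuse to start until nothing is blank. The sketch below is illustrative only: `PilotCharter`, its field names, and the example values are hypothetical, not a description of any particular organisation's tooling, and a shared document with the same fields would do the job just as well.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class PilotCharter:
    """Hypothetical record of the three pre-pilot answers (all names are illustrative)."""

    # Question 1: what does production look like?
    target_system: str = ""
    target_scale: str = ""
    integration_points: list[str] = field(default_factory=list)
    go_live_date: Optional[date] = None
    # Question 2: what would cause you not to ship?
    no_ship_criteria: list[str] = field(default_factory=list)
    # Question 3: who owns the production decision, and what do they need to see?
    decision_owner: str = ""
    decision_evidence: list[str] = field(default_factory=list)

    def unanswered(self) -> list[str]:
        """Return the questions that still lack a specific answer."""
        gaps = []
        production_defined = (
            self.target_system.strip()
            and self.target_scale.strip()
            and self.integration_points
            and self.go_live_date is not None
        )
        if not production_defined:
            gaps.append("What does production look like?")
        if not self.no_ship_criteria:
            gaps.append("What would cause you not to ship?")
        if not (self.decision_owner.strip() and self.decision_evidence):
            gaps.append("Who owns the production decision, and what do they need to see?")
        return gaps

    def is_pilot(self) -> bool:
        """True only when all three questions have concrete answers."""
        return not self.unanswered()


if __name__ == "__main__":
    # Made-up example: a named system and an owner, but nothing else pinned down.
    vague = PilotCharter(target_system="call centre triage", decision_owner="Head of Operations")
    for question in vague.unanswered():
        print("Unanswered:", question)
```

The check is deliberately crude. It cannot tell a good answer from a bad one, only a missing one, which is usually enough to surface the pilots that nobody intends to ship.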

Fix your governance before you start your next pilot, not during it. Define who makes the production decision. Define what they need to see. Define the timeline. Write it down before anyone writes a line of code. If your governance process can’t accommodate a production decision in under three months for a well-scoped AI system, the governance process is the problem.

And be honest with yourself about whether you’re in pilot because the technology isn’t ready or because your organisation isn’t ready. Those are different problems. The first one has a technical solution. The second one requires someone with authority to make a decision that probably makes some people uncomfortable.

Washing your AI capability through governance theatre and letting it degrade into RPA 2.0 is not risk management. It’s a choice to waste one of the most significant technological shifts in a generation. There is an IP goldmine sitting inside every organisation that has real data, real processes, and real clients. Most are burying it under committee reviews and vendor dependency.

AI is not a toy. It is not a vendor’s gift. It is not a feature you add to your product. It is a structural change to the way you build and deliver. Until you understand that and reset accordingly, you will keep piloting. You will keep presenting. And your competitors who figured it out will keep shipping.

Go study. Go deliver.

Andrew Baker is CIO at Capitec Bank. He writes about enterprise architecture, cloud infrastructure, banking technology, and the gap between how technology is talked about and how it actually gets built.