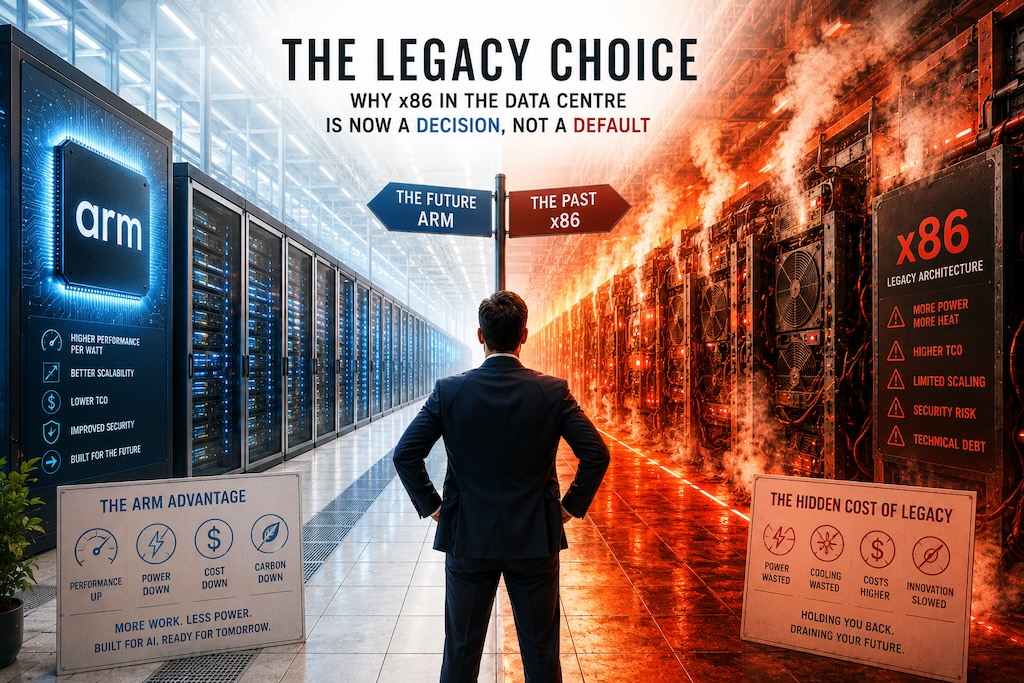

The Legacy Choice: Why x86 in the Data Centre Is Now an Expensive Decision, Not a Default

Choosing x86 server architecture in modern data centres now carries measurable cost premiums over ARM-based alternatives like AWS Graviton or Ampere Altra, particularly in energy consumption, licensing density, and performance-per-watt metrics. What was once the only viable enterprise option has become an active financial decision requiring justification, as competitive alternatives have matured sufficiently to handle most production workloads reliably.

The economics crossed the tipping point quietly. Most enterprises missed it. The ones who noticed are not going back.

1. How Defaults Become Legacy

Every technology that eventually becomes legacy spent years being the obvious choice. Nobody chose x86 over anything. It was simply there, it worked, the tooling was built around it, the hiring pipelines assumed it, the procurement processes were calibrated to it, and the vendors who sold it were very good at making sure it stayed that way. Inertia is not a conspiracy. It is just what happens when switching costs are real and the status quo is good enough.

The problem is that “good enough” is a moving threshold. When something better emerges, the gap between the default and the alternative starts narrow and widens over time. For a while the switching costs dominate and the rational choice is to stay put. Then the gap crosses a threshold where the cost of staying exceeds the cost of switching, and from that point forward every month you spend on the old platform is a month you are paying a premium for a decision you are not consciously making. The default has become the legacy choice, and you are funding it whether or not you realise it.

That threshold, for x86 in the data centre, has been crossed. The question for any engineering team running workloads on Intel or AMD today is not whether ARM is better. The benchmarks settled that argument some time ago. The question is why you have not moved yet, and whether the answer is a reason or a rationalisation.

2. What the Benchmarks Actually Show

AWS has been publishing price to performance data for Graviton since Graviton2 became generally available in 2020, and the trajectory is consistent and steep. AWS positioned Graviton2 instances at up to 40 percent better price to performance than comparable x86 instances. Graviton3, generally available from 2022, delivered up to 25 percent better compute performance than Graviton2 and, by AWS's figures, uses up to 60 percent less energy than comparable EC2 instances for the same performance. Graviton4, available from 2024, extended that lead further across general purpose, compute optimised, and memory optimised workload classes.

Microsoft’s Cobalt 100 ARM processor, built on Arm Neoverse N2 architecture and available on Azure from 2024, delivers up to 40 percent better price to performance than comparable x86 instances for scale-out workloads. Google’s Axion processor, built on the related Arm Neoverse V2 core, shows similar gains across web serving, containerised applications, and data processing pipelines.

These are not marginal improvements. A 25 to 40 percent improvement in price to performance, sustained across three processor generations and replicated independently by three of the four largest cloud providers, is a structural shift. It is not a benchmark artefact. It is not cherry picked workload selection. It is what happens when an architecture designed from the ground up for energy efficiency and parallel throughput competes against one that was designed for single threaded desktop performance in the 1970s and has been accumulating compatibility debt ever since.

The Apple M series chips, which run the same ARM instruction set, have made this argument viscerally obvious to anyone who bought a MacBook Pro in the last three years. The performance per watt gap is not subtle. Engineers who develop on Apple Silicon and deploy to x86 cloud instances are already experiencing the architecture mismatch as a daily friction, even if their organisations have not yet named it as a strategic problem.

3. What Intel and AMD Are Not Telling You

Intel’s response to ARM competition has been a sustained exercise in reframing. The argument shifted from “x86 is faster” to “x86 is more compatible” to “the ecosystem matters” to “our next generation will close the gap.” Each of these arguments has some merit in isolation, and none of them addresses the underlying reality, which is that the x86 instruction set is a liability that Intel and AMD are both paying an enormous engineering tax to maintain.

The x86 instruction set was designed for the 8086 processor, released in 1978. Everything built on top of it since then has had to maintain backward compatibility with nearly five decades of architectural decisions made for hardware that no longer exists. The x86 decode stage, the part of the processor that translates x86 instructions into the internal microoperations the chip actually executes, consumes a significant fraction of die area and power budget just to handle the translation layer that the compatibility requirement demands. ARM does not have this problem. The instruction set was designed for efficiency from the beginning and has been evolved with that constraint in mind throughout its history.

Intel’s Lunar Lake architecture, released in 2024, made genuine progress on power efficiency, particularly for laptop form factors. AMD’s Zen 5 is a strong architecture. Neither of them has closed the gap with ARM on price to performance in cloud workloads, and neither of them has a credible path to doing so while maintaining x86 compatibility, because the compatibility requirement is the constraint. They are optimising within a design space that ARM is not operating in.

The vendor lock-in argument, which Intel and AMD both benefit from and neither openly acknowledges, is that the x86 ecosystem creates dependency. The software, the tooling, the hiring assumptions, the vendor relationships, all of it is calibrated to x86 and switching requires work. This is true. It is also a description of switching costs, not a description of technical merit, and conflating the two is how the legacy choice persists long past the point where it makes economic sense.

4. The Switching Cost Illusion

The switching cost argument deserves serious treatment because it is not entirely wrong. Porting workloads to ARM requires recompilation, testing, and in some cases refactoring. Libraries that rely on x86 specific intrinsics need attention. Third party software dependencies need ARM compatible versions. None of this is free.

But the switching cost argument, as typically deployed in enterprise architecture discussions, has two serious flaws. The first is that it is usually estimated by people who have never actually done the migration and are therefore extrapolating from fear rather than experience. The genuine experience of teams that have migrated workloads to Graviton or Cobalt is that the effort is substantially smaller than anticipated. Compiled languages such as Go and Rust typically recompile cleanly for ARM with no code changes, and Java and Python code generally runs unchanged on ARM builds of the JVM and the interpreter, provided any native extensions ship ARM builds. Multi-architecture container images are now common across the major registries. The AWS migration guide for Graviton lists a remarkably short set of workloads that require meaningful porting effort, and most enterprise application stacks are not on that list.
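One rough way to replace fear with data is to count how much of a codebase actually touches x86-specific intrinsics. The sketch below is illustrative only: it assumes a C or C++ source tree, treats an include of one of the common x86 intrinsic headers as a signal that a file needs porting attention, and uses hypothetical helper names (`find_x86_includes`, `scan_tree`). It will miss inline assembly and anything hidden inside third party binaries.

```python
import re
from pathlib import Path

# Common headers that expose x86 SIMD intrinsics. A hit suggests the file
# needs porting attention; this list is a starting point, not exhaustive.
X86_HEADERS = ("immintrin.h", "xmmintrin.h", "emmintrin.h", "x86intrin.h")
INCLUDE_RE = re.compile(r'#\s*include\s*[<"]([^>"]+)[>"]')
SOURCE_SUFFIXES = {".c", ".cc", ".cpp", ".h", ".hpp"}


def find_x86_includes(text):
    """Return the x86 intrinsic headers included in one file's text."""
    return sorted({name for name in INCLUDE_RE.findall(text) if name in X86_HEADERS})


def scan_tree(root):
    """Map each C/C++ file under root to the x86 intrinsic headers it includes."""
    hits = {}
    for path in Path(root).rglob("*"):
        if path.is_file() and path.suffix in SOURCE_SUFFIXES:
            found = find_x86_includes(path.read_text(errors="ignore"))
            if found:
                hits[str(path)] = found
    return hits
```

Running `scan_tree(".")` at the root of a repository gives a first estimate of how many files sit on the short list that needs real porting work, as opposed to a plain recompile.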

The second flaw is that the switching cost argument is applied asymmetrically. Organisations that carefully estimate the cost of migrating to ARM rarely apply the same rigour to estimating the ongoing cost of staying on x86. If ARM runs a workload for 30 percent less than x86 at the same throughput, and that workload costs one million dollars per year on x86, the cost of staying on x86 is three hundred thousand dollars per year. A migration that takes three months of engineering effort and costs two hundred thousand dollars in total pays for itself in eight months and delivers positive return every month thereafter. This calculation is obvious once you make it. Most organisations never make it because the cost of staying is invisible and the cost of switching is concrete.
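The payback arithmetic above can be sketched in a few lines. This is a minimal illustration, not a costing model: the function names are hypothetical, and it assumes the 30 percent figure is a straight 30 percent reduction in run cost. Read strictly as 30 percent more performance per dollar, the saving is closer to 23 percent, which the second helper makes explicit.

```python
def payback_months(annual_x86_cost, cost_reduction, migration_cost):
    """Months until cumulative savings cover the one-off migration cost.

    cost_reduction is the fraction of the x86 bill saved on ARM (0.30 = 30%).
    """
    monthly_saving = annual_x86_cost * cost_reduction / 12
    return migration_cost / monthly_saving


def saving_from_perf_gain(perf_per_dollar_gain):
    """Convert a performance-per-dollar gain into a cost saving fraction.

    A 30% gain means the same work costs 1 / 1.30 of the original bill.
    """
    return 1 - 1 / (1 + perf_per_dollar_gain)


# The article's example: $1M per year workload, 30% saving, $200k migration.
optimistic = payback_months(1_000_000, 0.30, 200_000)

# Stricter reading: 30% better performance per dollar, same migration cost.
strict = payback_months(1_000_000, saving_from_perf_gain(0.30), 200_000)

print(f"payback at 30% cost reduction: {optimistic:.1f} months")
print(f"payback at 30% perf-per-dollar gain: {strict:.1f} months")
```

Under either reading the migration pays for itself in under a year. The point of writing it down is that the cost of staying becomes a number that can be argued with, instead of an invisible line item.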

5. The Architecture Determines the Ceiling

There is a deeper argument here that goes beyond individual workload economics, and it is the one that should matter most to engineers trying to make the case upward.

The performance ceiling of a workload on x86 is determined partly by the application architecture and partly by the instruction set overhead that the processor cannot avoid. No amount of application optimisation removes the x86 decode tax. No amount of tuning changes the fact that x86 processors are burning power on backward compatibility that produces no useful work for your application. The ceiling is lower than it needs to be and it is lower by design, specifically by the design decision to maintain compatibility with hardware from 1978.

ARM’s ceiling is higher and it scales differently. The Neoverse cores that underlie Cobalt (N2) and Axion (V2) are designed specifically for cloud scale workloads, with hardware support for memory tagging, pointer authentication, and cryptographic acceleration that reduces the software overhead for security sensitive applications. Financial services workloads, which are almost always security sensitive and frequently cryptography heavy, benefit disproportionately from this. The TLS termination cost, the encryption cost at rest and in transit, the signing and verification cost across service boundaries, all of these are lower on ARM not because the software is better written but because the hardware is doing more of the work in silicon.

The question for a CIO approving a multi year infrastructure investment is not “is ARM better today.” The question is “where does this trajectory go over the investment horizon.” Intel and AMD have been trying to close the ARM efficiency gap for four years and have not closed it. The companies designing ARM chips for cloud workloads, AWS, Microsoft, Google, Ampere, are not standing still. The trajectory is not ambiguous.

6. What the Holdouts Are Actually Saying

When engineering teams propose ARM migration and encounter resistance from architecture review boards, procurement committees, or infrastructure leadership, the objections almost always fall into one of four categories.

The first is the compatibility argument: some of our software does not run on ARM. This is often true for a small subset of workloads and is almost never true for the majority. The correct response is to identify the specific workloads that have genuine compatibility constraints, migrate everything else, and treat the constrained workloads as a separate problem with a separate timeline.

The second is the support argument: our vendors do not fully support ARM. This was partially true in 2021 and is largely false in 2025. Every major enterprise software vendor with cloud ambitions has ARM support. The ones that do not are signalling something important about their investment in the platform, and that signal should inform your vendor evaluation independently of the ARM question.

The third is the skills argument: our teams know x86 and do not know ARM. This is a training problem, not an architecture problem, and it is a training problem with a solution that takes weeks rather than years. The ARM developer experience for cloud workloads is not meaningfully different from the x86 developer experience for the vast majority of application development work. The differences are at the systems level and the infrastructure level, which means they affect a small subset of your engineering population.

The fourth is the risk argument: we have production systems running on x86 and migration carries risk. This is true and it is also an argument for planning the migration carefully, not an argument against migration. Every technology refresh carries migration risk. The question is whether the risk of migrating is greater than the ongoing cost of not migrating, and for most workloads at current price to performance differentials, it is not.

What none of these arguments addresses is the question that should be driving the conversation: what is the business case for staying on x86? Not “what are the risks of leaving” but “what is the positive argument for remaining.” If the best case for x86 is that migration is hard and things are working well enough, then you are describing a default, not a decision. And defaults, at this point in the ARM trajectory, are costing money every month they persist.

7. Where This Goes

The cloud providers building their own ARM silicon are not doing it as an experiment. AWS has committed to Graviton as the default recommendation for new workloads across its entire compute portfolio. Microsoft has committed to Cobalt. Google has committed to Axion. These are multi billion dollar capital commitments to an architecture that the same organisations were running on Intel and AMD chips five years ago. They moved because the economics demanded it, and they moved at a scale that makes the transition irreversible.

The x86 data centre is not going away immediately. Intel and AMD will sell chips for years, particularly into workloads with genuine compatibility requirements, legacy software estates, and on premises infrastructure that cannot be migrated on a cloud timeline. But the growth is on ARM. The investment is on ARM. The price to performance trajectory is on ARM. The engineers building new systems today who choose x86 because it is the default are making the same category of decision as the engineers who chose spinning disk over SSD in 2015 because that was what the procurement catalogue offered.

The legacy choice is visible in hindsight. The point of this argument is to make it visible now, while the switching costs are manageable and the performance differential is wide enough to fund the migration several times over. Your x86 bill is a subsidy you are paying to Intel and AMD for the privilege of not making a decision. The question is how long you intend to keep paying it.

Andrew Baker is Group CIO at Capitec Bank and former Director of Engineering for EC2 at AWS. He writes about technology leadership, cloud infrastructure, and organisational design at andrewbaker.ninja and on Substack at @futureherman.